Hello everyone,

This is my talk at GECCO16 for our paper “General Video Game Level Generation”.

Hello everyone, I am Ahmed Khalifa, a PhD student at NYU Tandon School of Engineering, and today I am going to present my paper, General Video Game Level Generation.

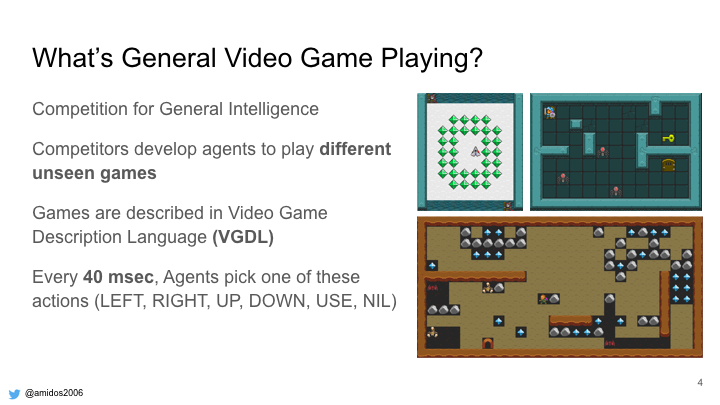

We want to design a framework that allows people to develop general level generators for any game described in the Video Game Description Language.

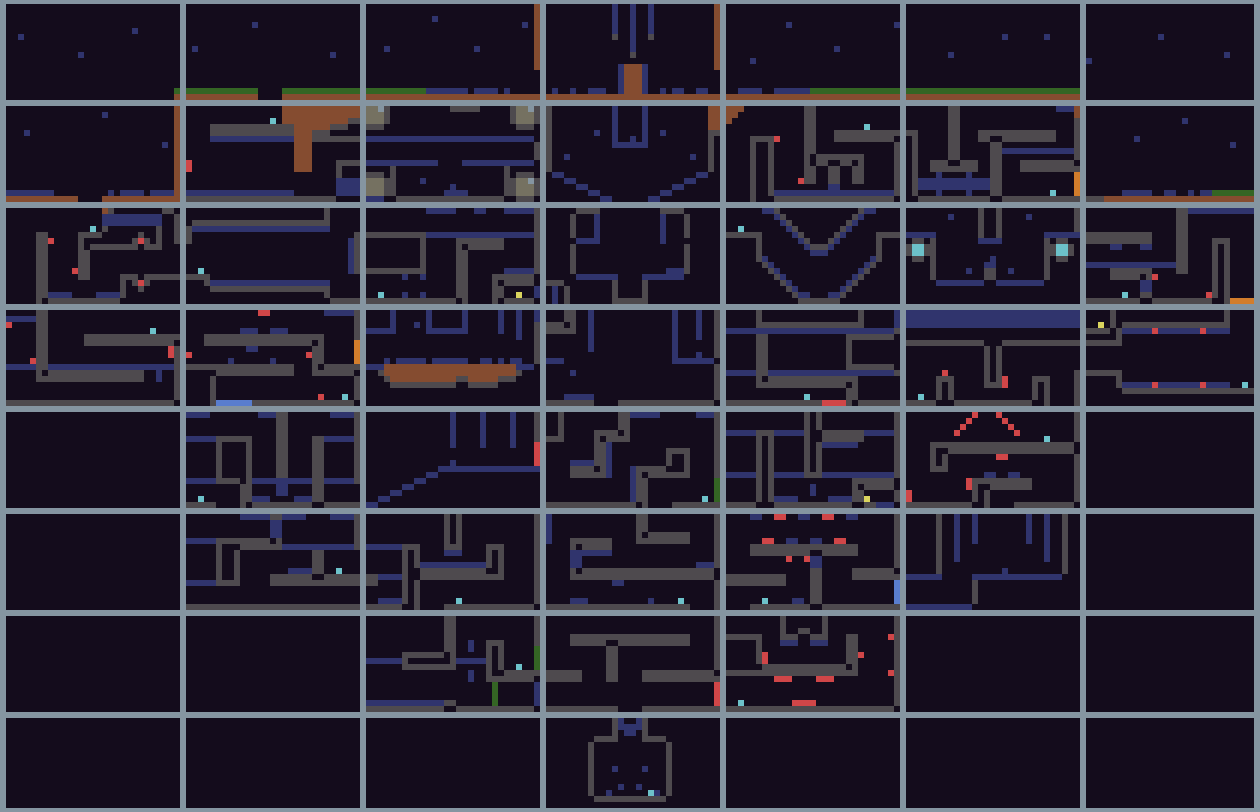

So what is level generation? It is using computer algorithms to design levels for games. People in the industry have been using it for a very long time. At the beginning the reason was technical difficulties, but now it is used to provide more content to the user, and it enables new types of games. The problem with level generation is that all the well-known generators are designed for a specific game, so they depend on domain knowledge to make sure the levels are as good as possible. Doing that for every new game is exhausting, so we wanted a single algorithm that can be used on multiple games. In order to do that, we need a way to describe the game to the generators.

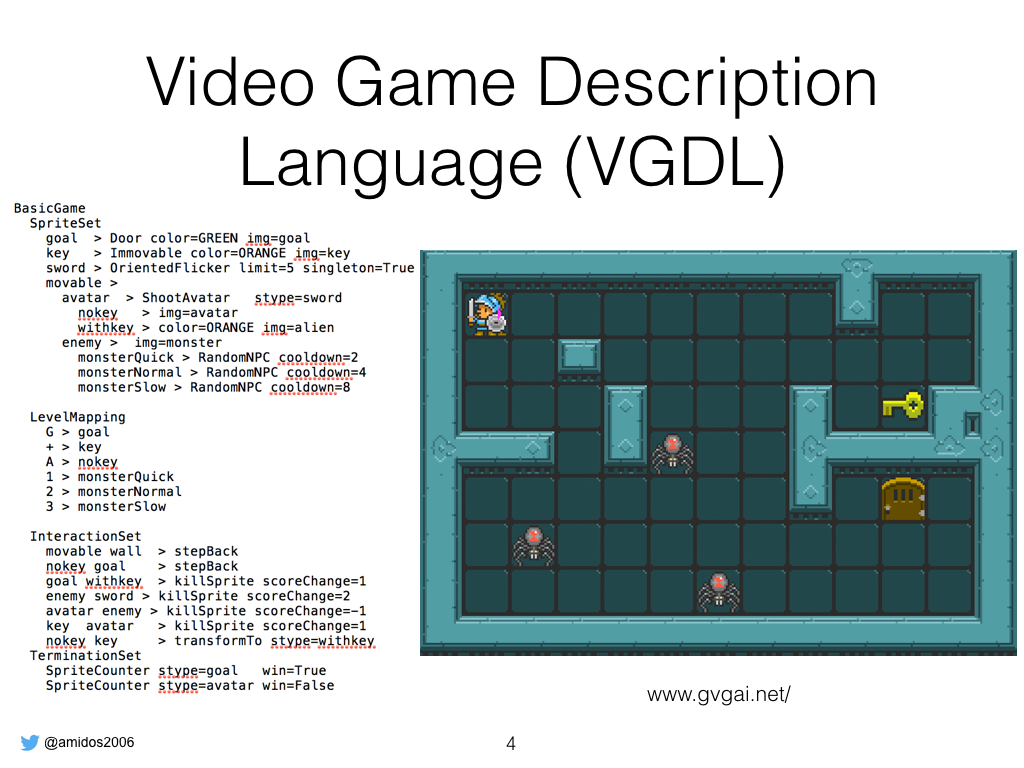

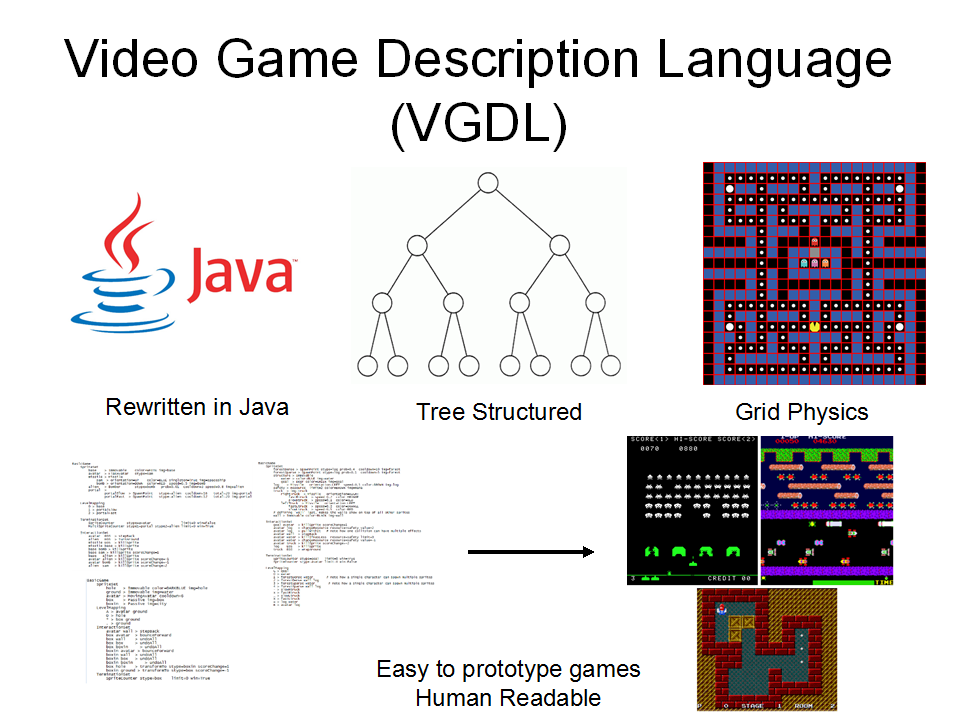

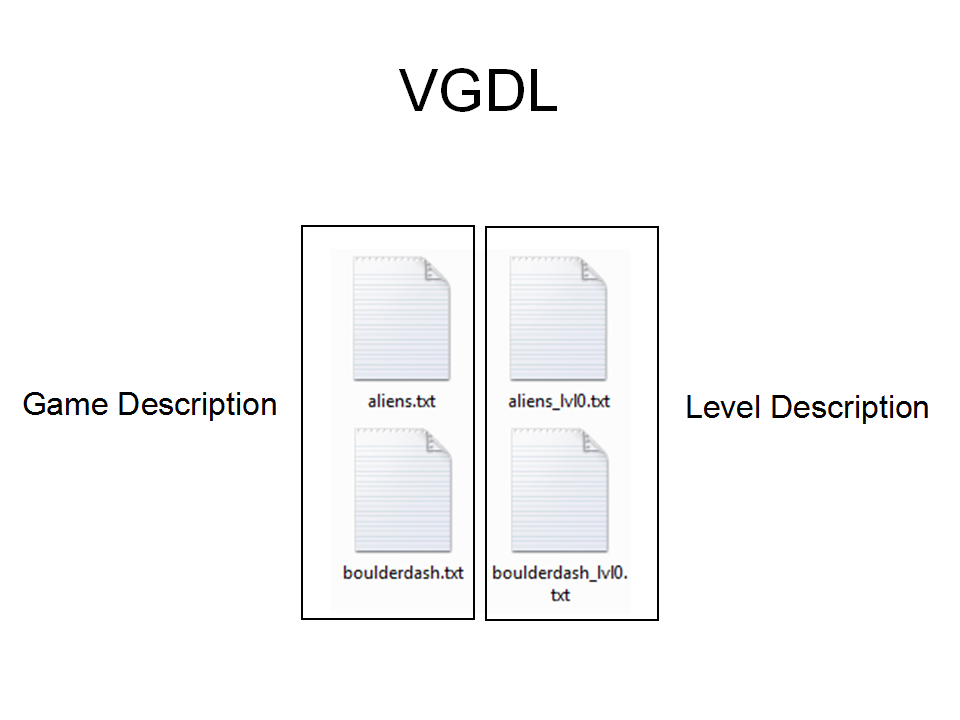

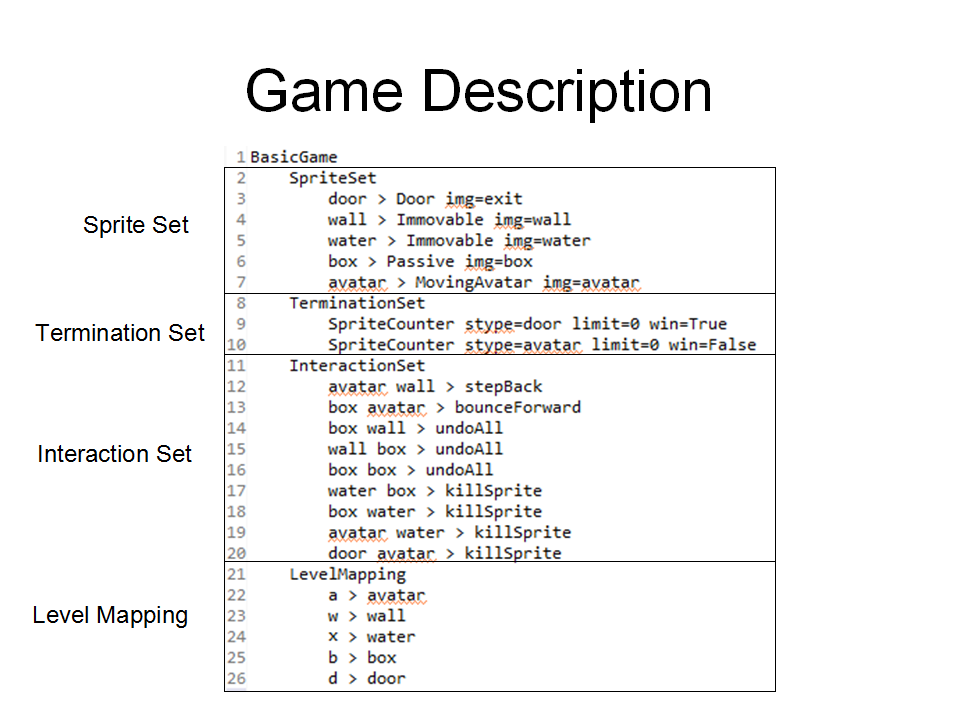

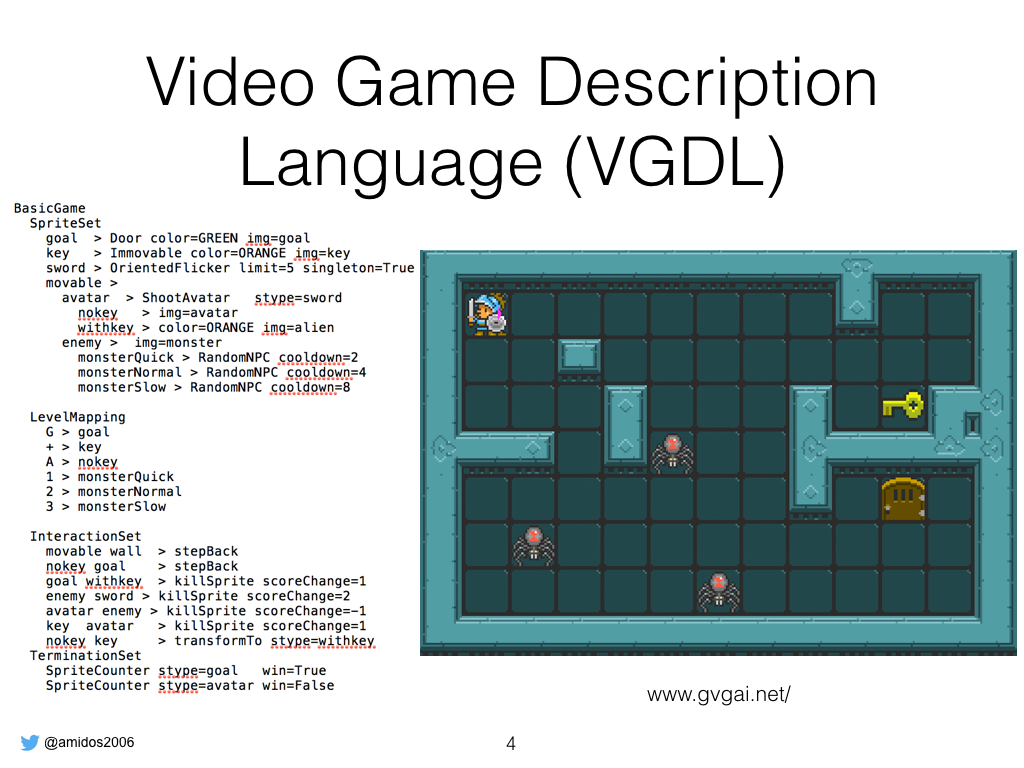

That’s why we are using the Video Game Description Language (VGDL). It’s a scripting language that makes creating different games super easy. The script shown here is for this game, which is kind of like the dungeon system from The Legend of Zelda. VGDL is simple: you need to define four sections, SpriteSet, LevelMapping, InteractionSet, and TerminationSet. The SpriteSet defines the game objects, such as the goal; the LevelMapping maps the characters used in a level file to sprites; the InteractionSet defines the interactions between different objects; and the TerminationSet defines how the game should end.

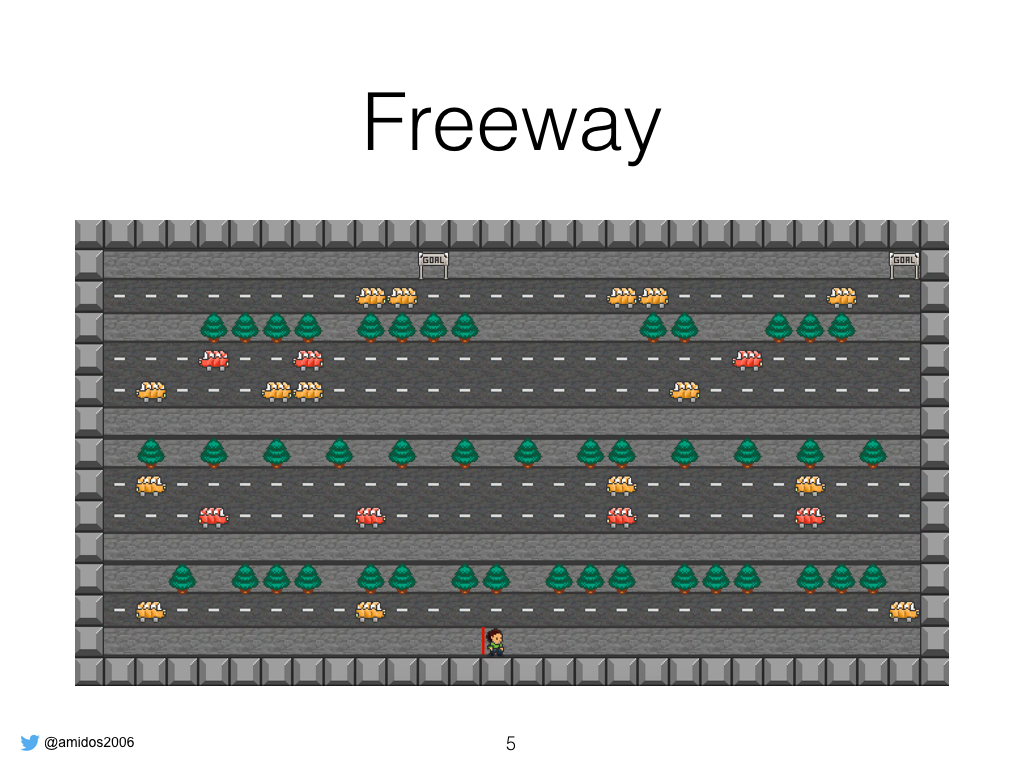

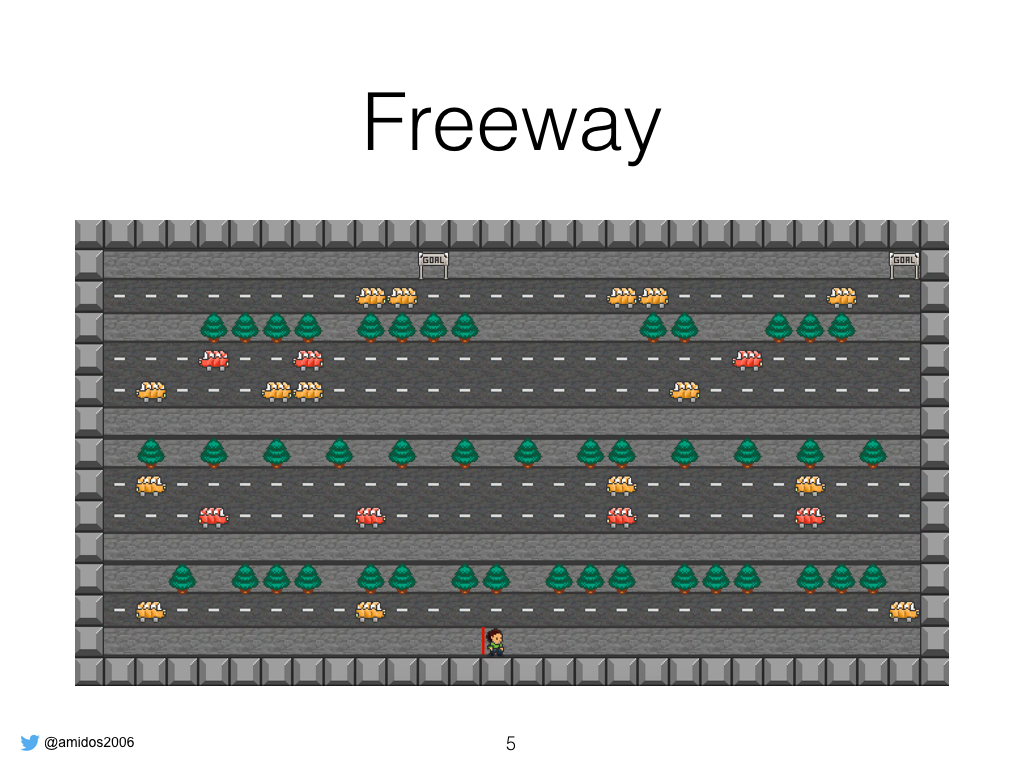

Here are some extra examples that show VGDL is able to describe different games, such as Freeway, where the player tries to reach the exit by crossing the street.

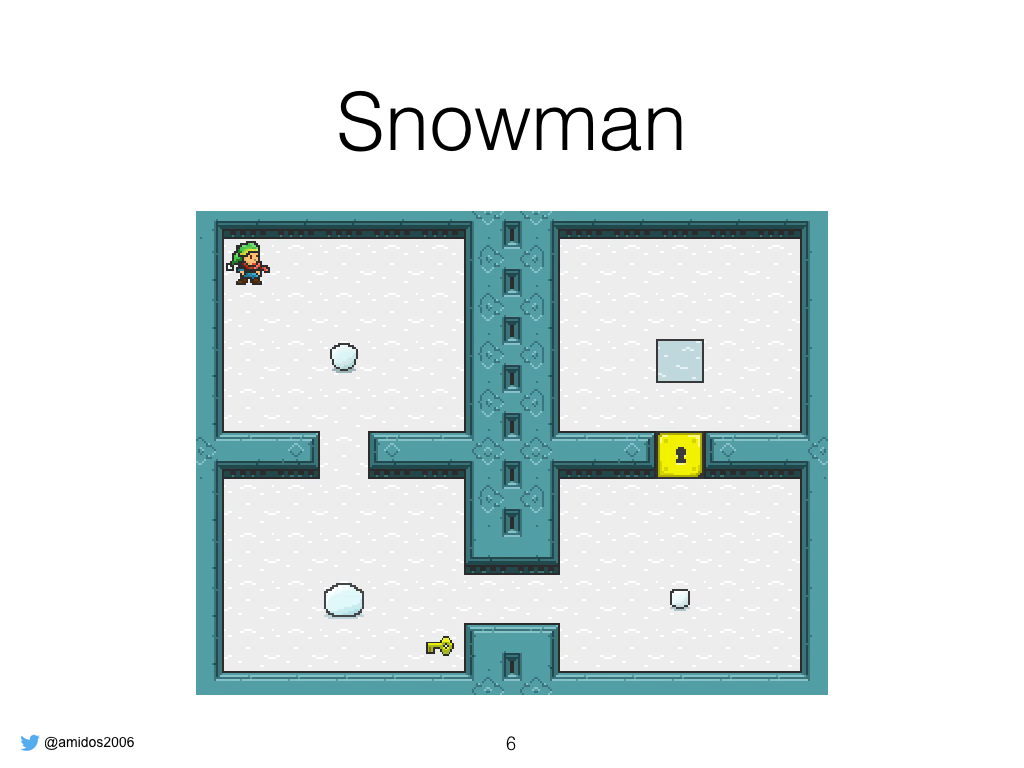

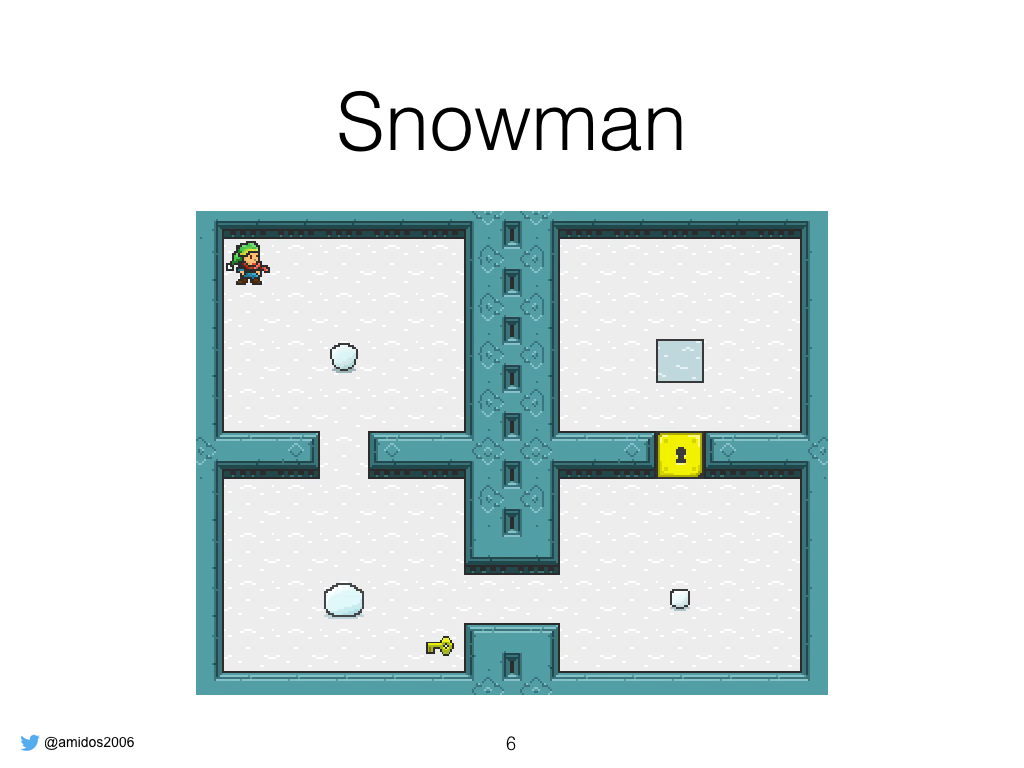

Snowman is a puzzle game where the player tries to build a snowman at the end position by placing the pieces correctly. It’s inspired by A Good Snowman Is Hard To Build, a famous indie game about creating snowmen, giving them names, and hugging them.

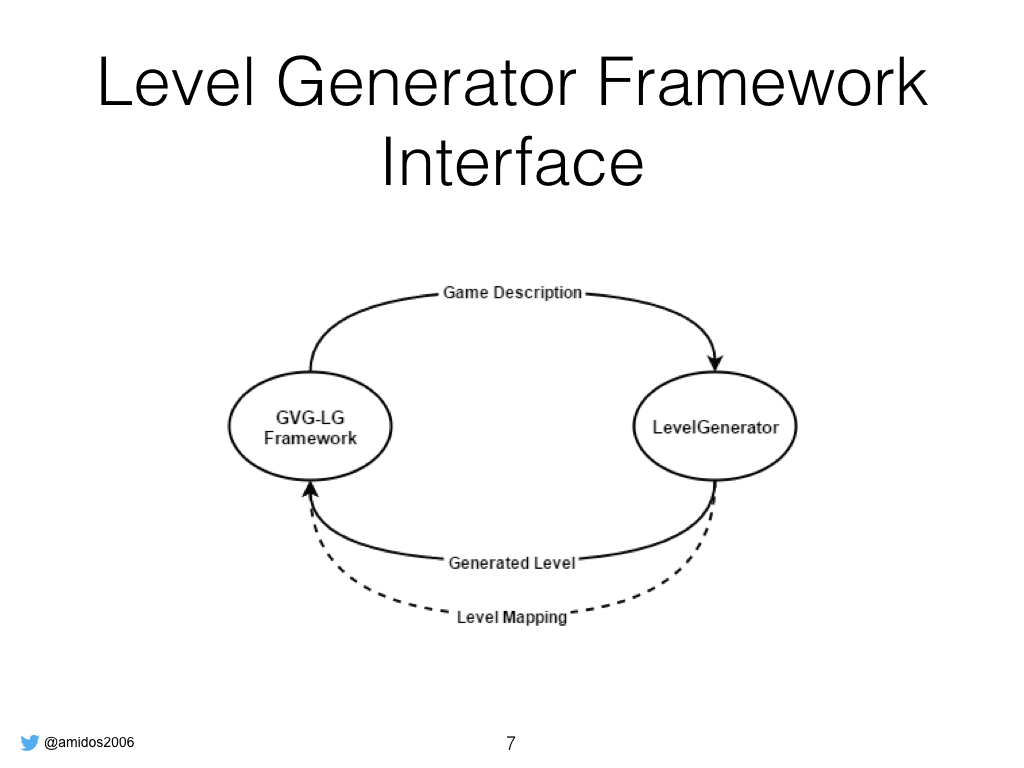

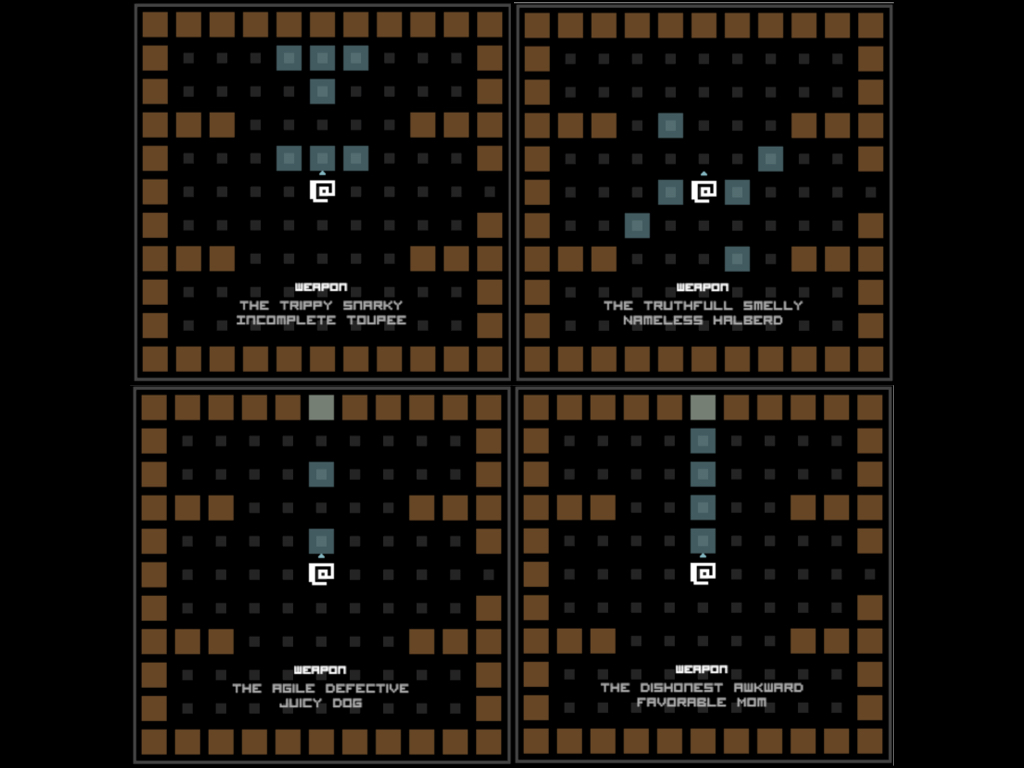

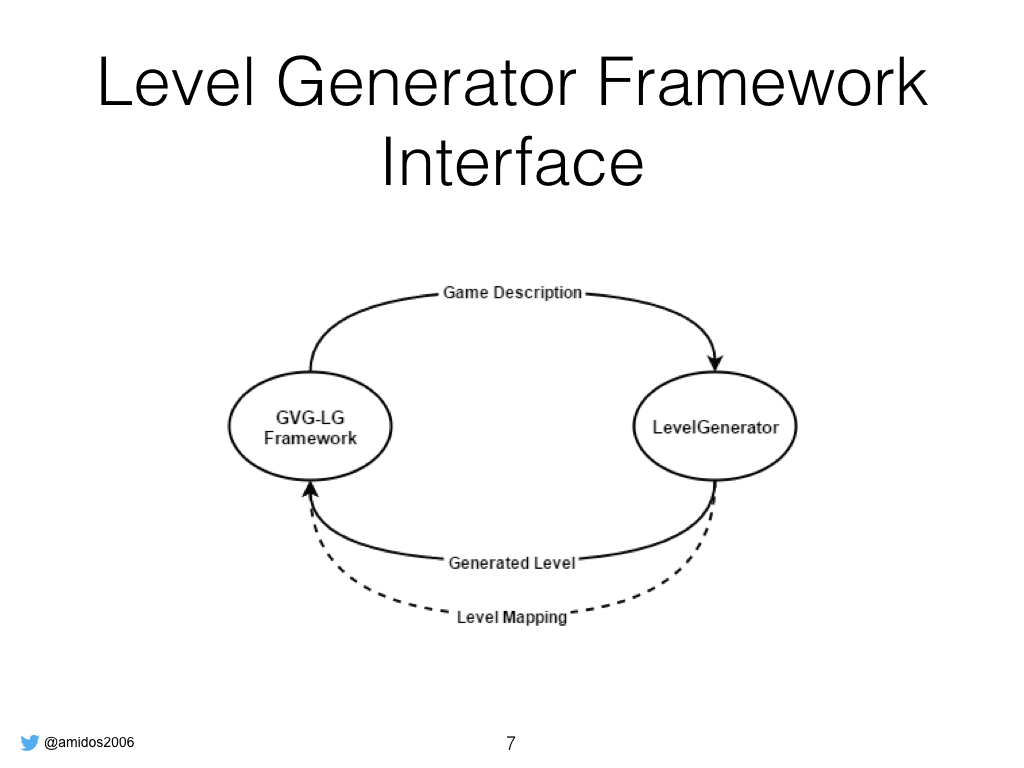

Let’s get back to the framework. We wanted to keep it as simple as possible, so each user writes a level generator that gets a VGDL description of the game and has to return a generated level.
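To make that contract concrete, here is a minimal sketch in Python. The names `GameDescription`, `LevelGenerator`, `generate_level`, and `EmptyRoomGenerator` are hypothetical and chosen only for illustration; they are not the framework's actual API, and the wall and floor characters are assumed.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass, field


@dataclass
class GameDescription:
    """Hypothetical stand-in for the information parsed from a VGDL file."""
    sprites: list = field(default_factory=list)
    # Maps a level character to the sprite names placed on that tile.
    level_mapping: dict = field(default_factory=dict)


class LevelGenerator(ABC):
    """Hypothetical contract: game description in, level text out."""

    @abstractmethod
    def generate_level(self, game: GameDescription) -> str:
        """Return the level as rows of mapping characters joined by newlines."""


class EmptyRoomGenerator(LevelGenerator):
    """Trivial generator: a walled room with empty floor inside.

    It ignores the game description; a real generator would read it.
    """

    def __init__(self, width: int = 6, height: int = 4):
        self.width = width
        self.height = height

    def generate_level(self, game: GameDescription) -> str:
        rows = []
        for y in range(self.height):
            if y in (0, self.height - 1):
                rows.append("w" * self.width)  # assumed wall character
            else:
                rows.append("w" + "." * (self.width - 2) + "w")
        return "\n".join(rows)
```

Any generator that fills in `generate_level` could be swapped in without the rest of the pipeline caring how the level was produced.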

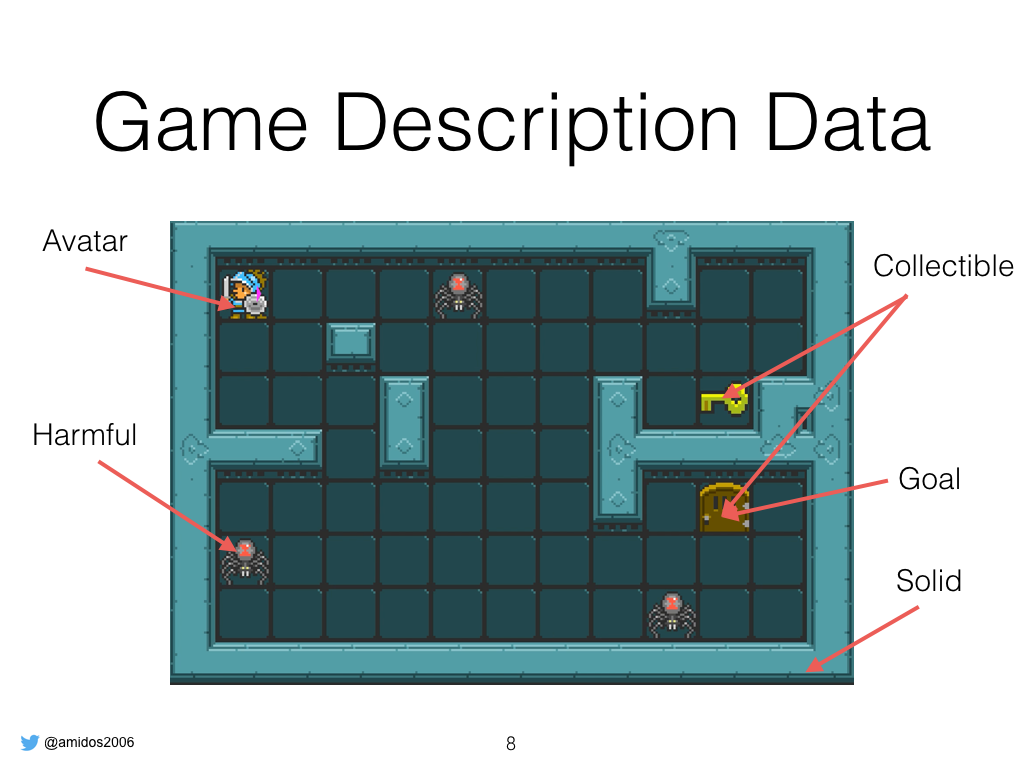

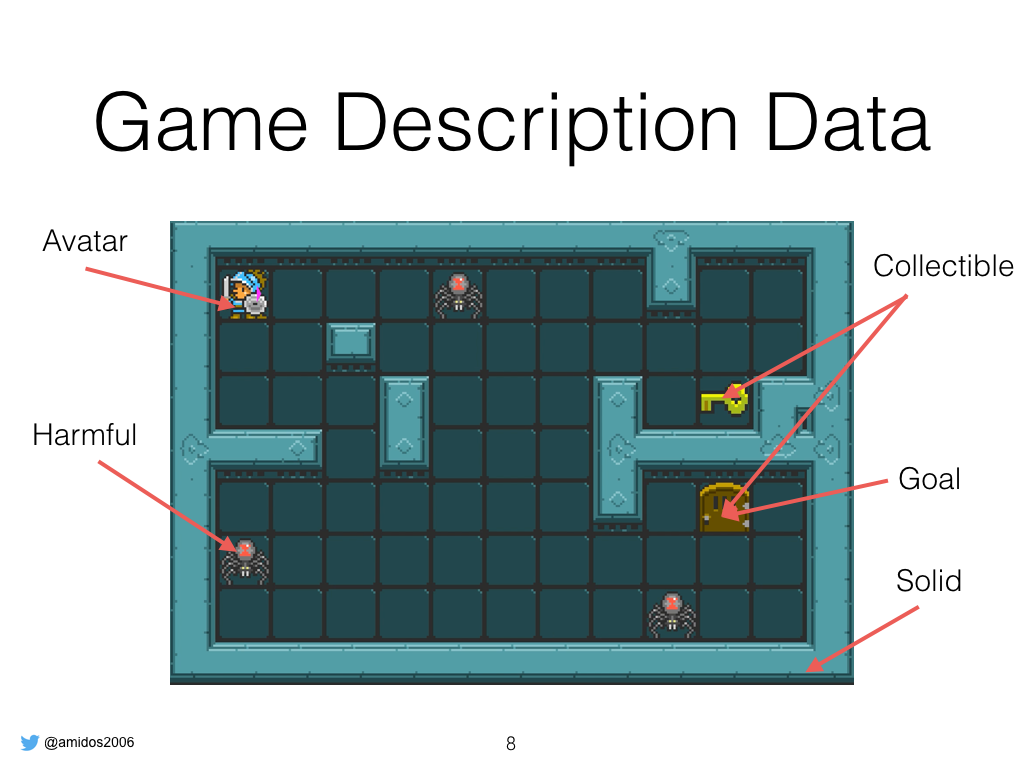

The game description object gives the user all the information in the VGDL file and also tags the sprites according to their interactions. For example, if an object blocks the player’s movement and doesn’t react with anything else, it is considered solid; if it is the player, it is tagged as the avatar; if it can kill the player, it’s a harmful object; and a collectible object is something that gets destroyed by the player.
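The tagging idea can be sketched roughly as follows. This is a simplification under stated assumptions: the `Interaction` record and the effect labels `"blocked"` and `"destroyed"` are hypothetical, not VGDL's real effect names, and the real framework may use more tags and more careful rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    actor: str   # sprite the effect is applied to
    other: str   # sprite it collides with
    effect: str  # simplified, hypothetical label: "blocked" or "destroyed"


def tag_sprite(sprite, avatar, interactions):
    """Assign one tag to a sprite based on the rules it takes part in."""
    if sprite == avatar:
        return "avatar"
    involving = [i for i in interactions if sprite in (i.actor, i.other)]
    # Harmful: the avatar is destroyed when it collides with this sprite.
    if any(i.actor == avatar and i.other == sprite and i.effect == "destroyed"
           for i in involving):
        return "harmful"
    # Collectible: this sprite is destroyed when it collides with the avatar.
    if any(i.actor == sprite and i.other == avatar and i.effect == "destroyed"
           for i in involving):
        return "collectible"
    # Solid: it blocks the avatar and never does anything except block.
    blocks_avatar = any(i.actor == avatar and i.other == sprite
                        and i.effect == "blocked" for i in involving)
    only_blocks = all(i.other == sprite and i.effect == "blocked"
                      for i in involving)
    if blocks_avatar and only_blocks:
        return "solid"
    return "other"
```

For a Zelda-like rule set, a wall that only blocks movement would come out as solid, an enemy as harmful, and a key as collectible.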

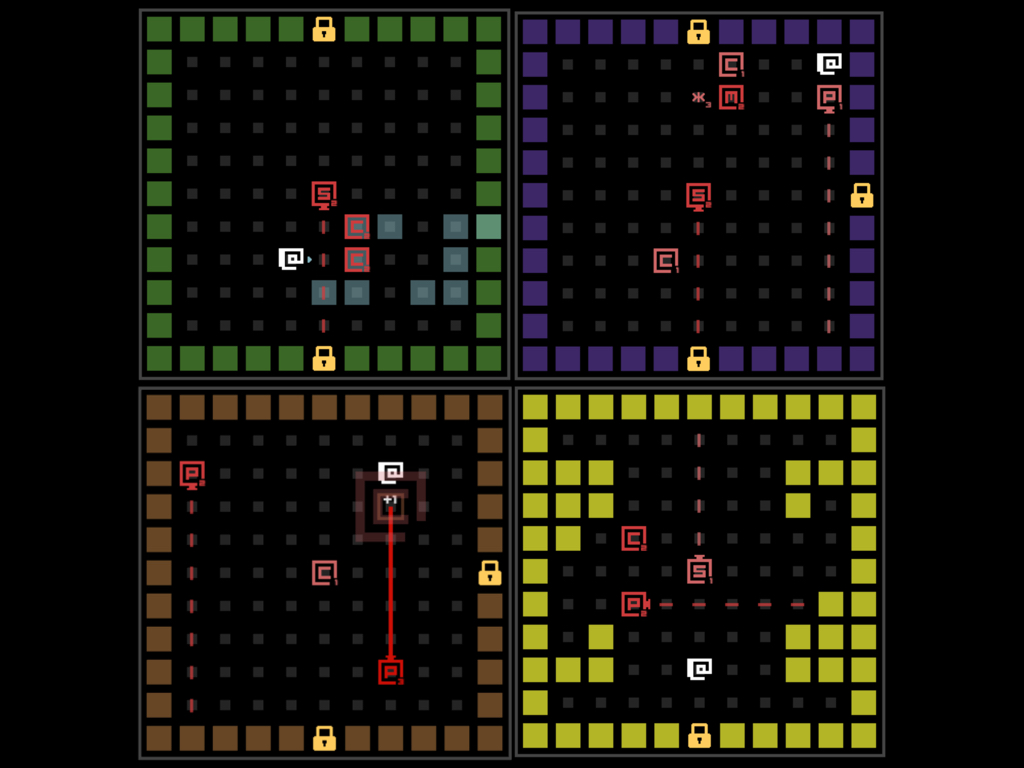

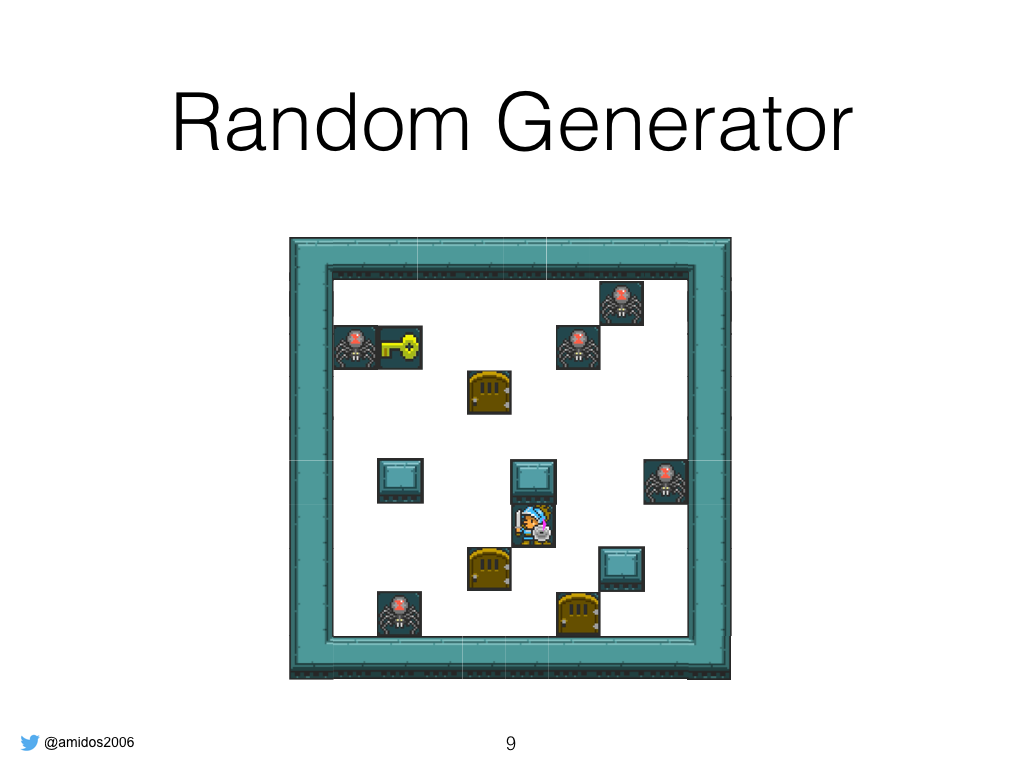

We designed three different level generators and shipped them with the framework. The first one is the random generator, which places all game objects at different random locations on the map.
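A random generator along these lines could look like the sketch below. It assumes a level is a grid of single characters, that each game object is placed exactly once, and that `.` means an empty tile; the function name and parameters are hypothetical.

```python
import random


def random_level(chars, width, height, empty=".", rng=None):
    """Place each character in `chars` once, each on a different random tile."""
    rng = rng or random.Random()
    if len(chars) > width * height:
        raise ValueError("more objects than tiles")
    grid = [[empty] * width for _ in range(height)]
    cells = [(x, y) for y in range(height) for x in range(width)]
    # sample() picks distinct cells, so no two objects share a tile.
    for ch, (x, y) in zip(chars, rng.sample(cells, len(chars))):
        grid[y][x] = ch
    return "\n".join("".join(row) for row in grid)
```

Because every object is placed once, nothing the game needs is ever missing from the level, even though the layout itself carries no design intent.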

The second one is the constructive generator, which tries to use the available information to design a good level. It starts by calculating percentages for each object type, then draws a level layout, then places the avatar at a random location, then places harmful objects at positions chosen in proportion to their distance from the avatar, then adds goal objects. After that it goes through a post-processing step where it adds more goal objects if needed to satisfy the goal constraints.
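Here is a simplified sketch of those steps, not the generator shipped with the framework. It assumes the object counts are passed in directly (standing in for the percentage calculation), uses a plain walled room as the layout, measures distance as Manhattan distance, and leaves out the post-processing step. The characters `w`, `A`, `e`, and `g` are assumed.

```python
import random


def constructive_level(width, height, n_harmful, n_goal, rng=None):
    rng = rng or random.Random()
    # Layout: solid border around an empty interior.
    grid = [["w" if x in (0, width - 1) or y in (0, height - 1) else "."
             for x in range(width)] for y in range(height)]
    free = [(x, y) for y in range(1, height - 1) for x in range(1, width - 1)]
    if len(free) < 1 + n_harmful + n_goal:
        raise ValueError("level too small for the requested objects")
    # Avatar: a uniformly random free tile.
    ax, ay = free.pop(rng.randrange(len(free)))
    grid[ay][ax] = "A"
    # Harmful objects: tiles farther from the avatar are more likely.
    for _ in range(n_harmful):
        weights = [abs(x - ax) + abs(y - ay) for x, y in free]
        i = rng.choices(range(len(free)), weights=weights)[0]
        x, y = free.pop(i)
        grid[y][x] = "e"
    # Goal objects: uniformly random among the remaining free tiles.
    for _ in range(n_goal):
        x, y = free.pop(rng.randrange(len(free)))
        grid[y][x] = "g"
    return "\n".join("".join(row) for row in grid)
```

Weighting by distance keeps enemies away from the starting position most of the time, without forbidding nearby placements outright.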

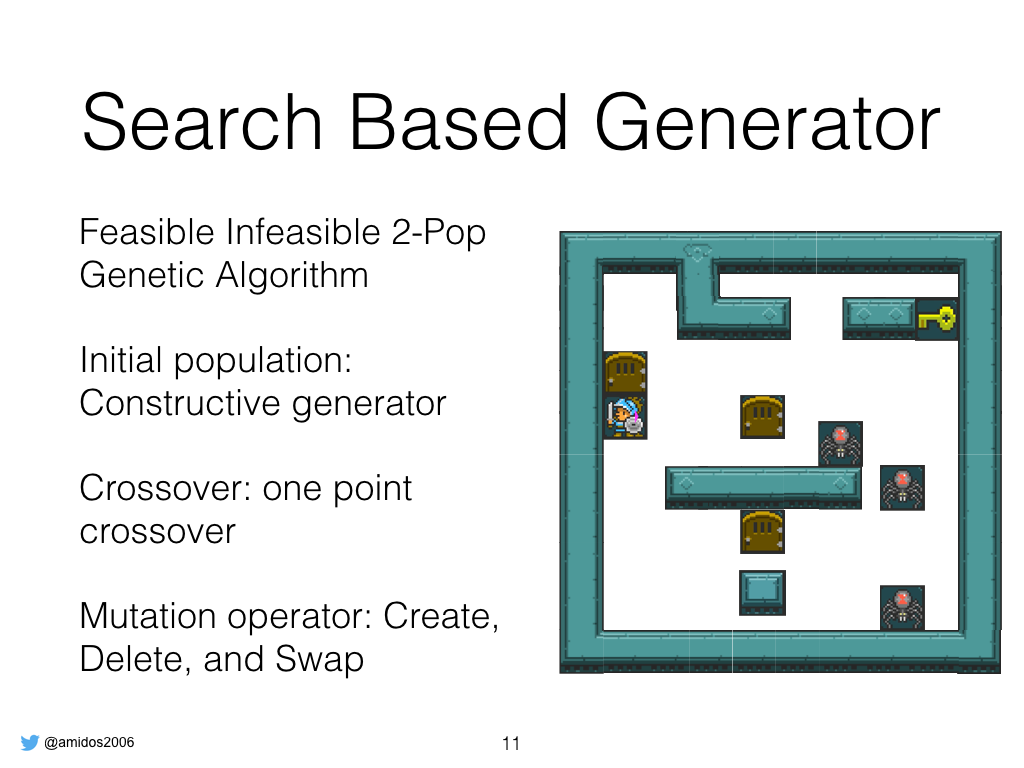

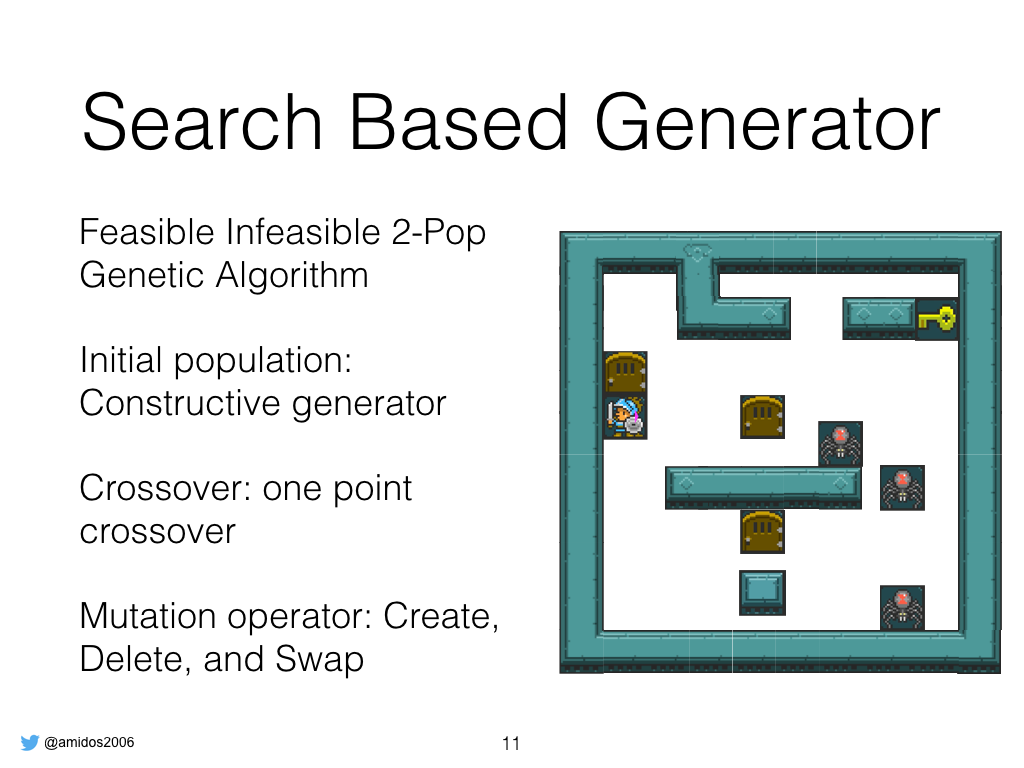

The third one is a search-based generator, which uses a feasible-infeasible two-population genetic algorithm to generate the level. We seeded the population with levels generated by the constructive algorithm to make sure the GA finds better levels. We use one-point crossover, where two levels are swapped around a certain point. The mutations used include create, which creates a random object at a random tile, and delete, which deletes all objects from a random position.
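The operators just described can be sketched like this. The level representation (a flat list of tiles, each tile a list of sprite names) and the function names are assumptions for illustration; the full two-population loop is not shown.

```python
import random


def one_point_crossover(a, b, rng):
    """Swap the tails of two equal-length levels around a random point."""
    point = rng.randrange(1, len(a))
    child1 = [list(t) for t in a[:point] + b[point:]]
    child2 = [list(t) for t in b[:point] + a[point:]]
    return child1, child2


def mutate_create(level, sprites, rng):
    """Return a copy with one random sprite added to one random tile."""
    child = [list(t) for t in level]
    child[rng.randrange(len(child))].append(rng.choice(sprites))
    return child


def mutate_delete(level, rng):
    """Return a copy with every sprite removed from one random tile."""
    child = [list(t) for t in level]
    child[rng.randrange(len(child))].clear()
    return child
```

Each operator returns new lists, so parents stay untouched and can remain in the population.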

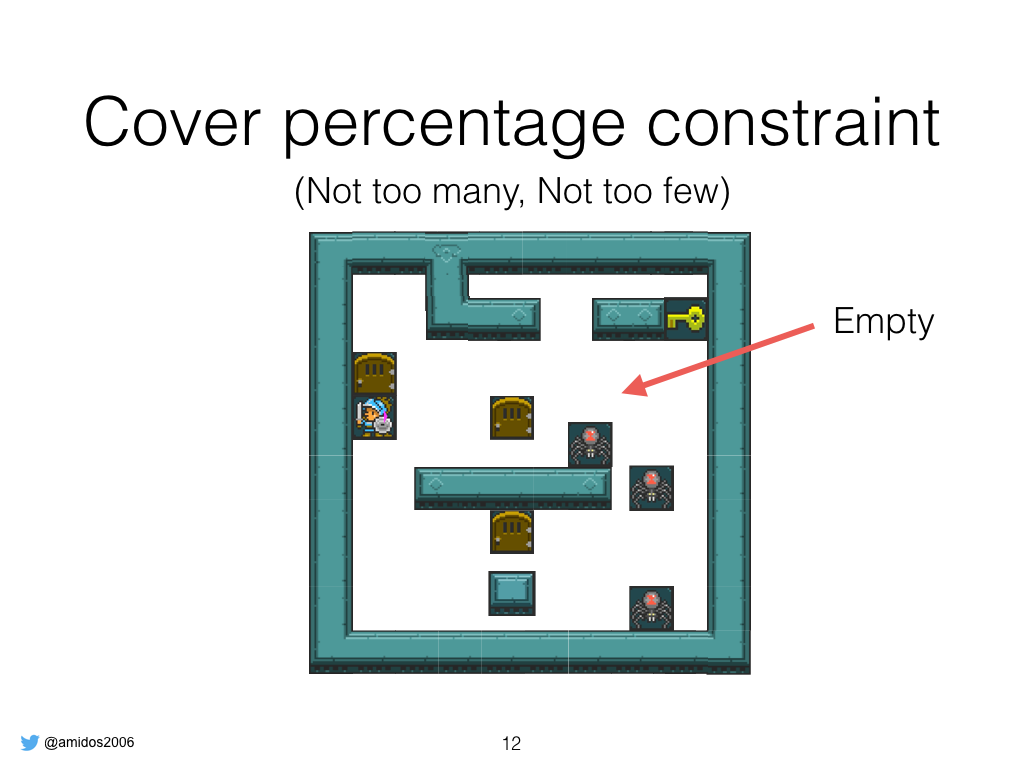

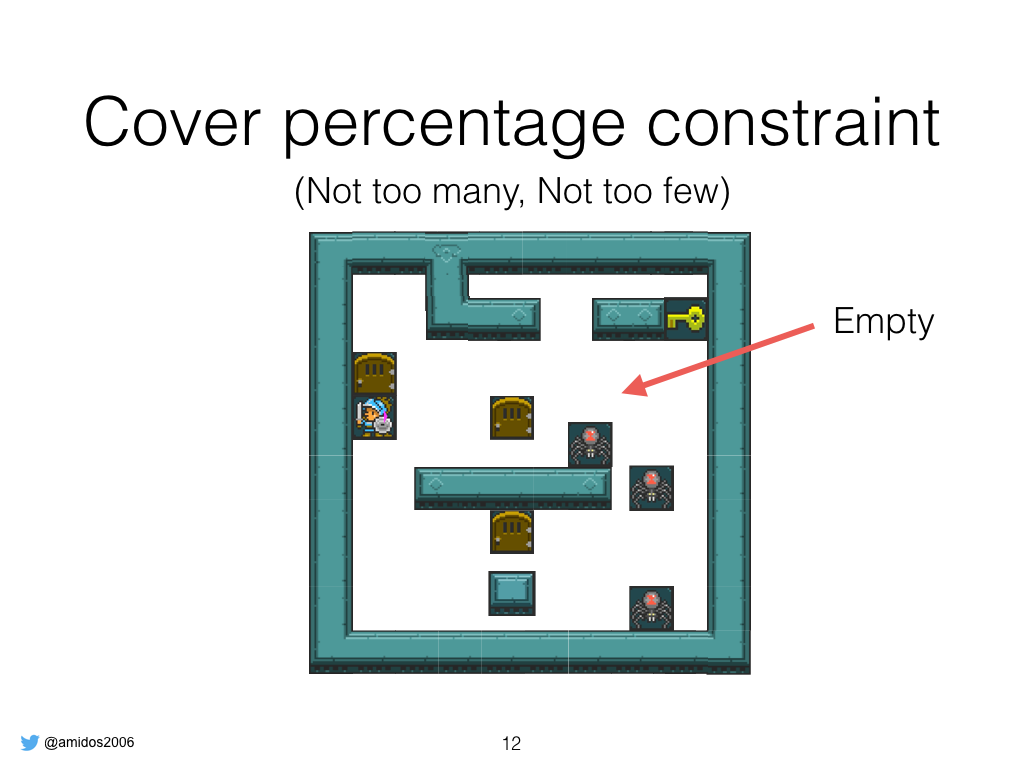

We used several constraints to ensure the playability of the levels. The first one is cover percentage, so we never generate overpopulated or very sparse levels.

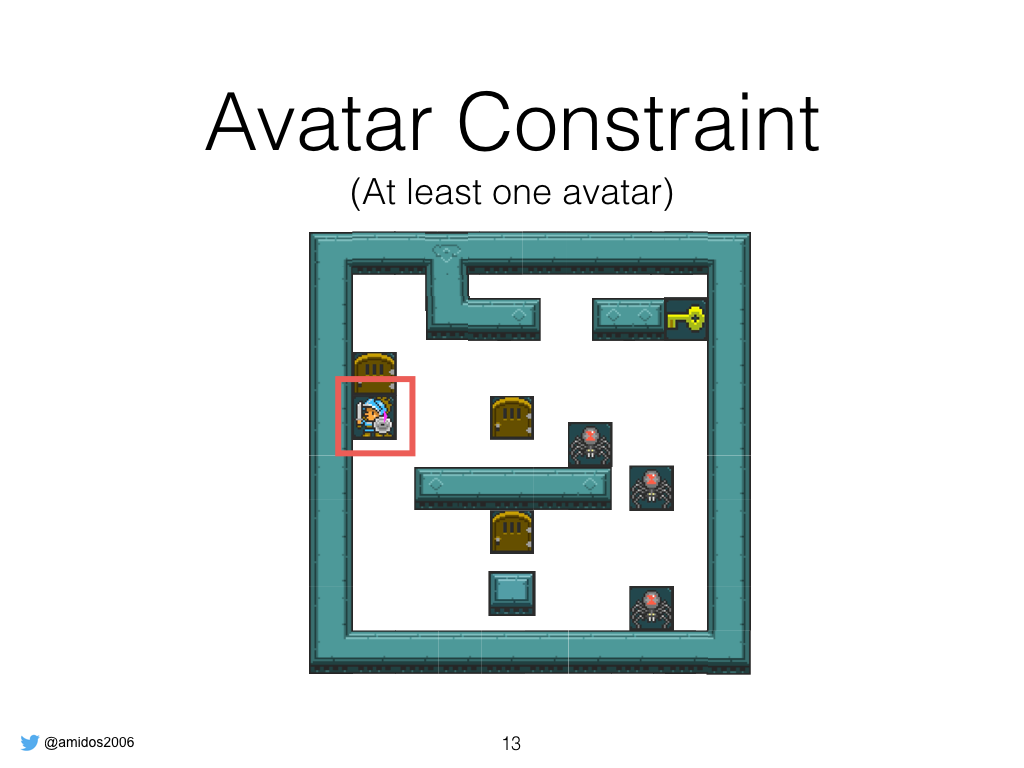

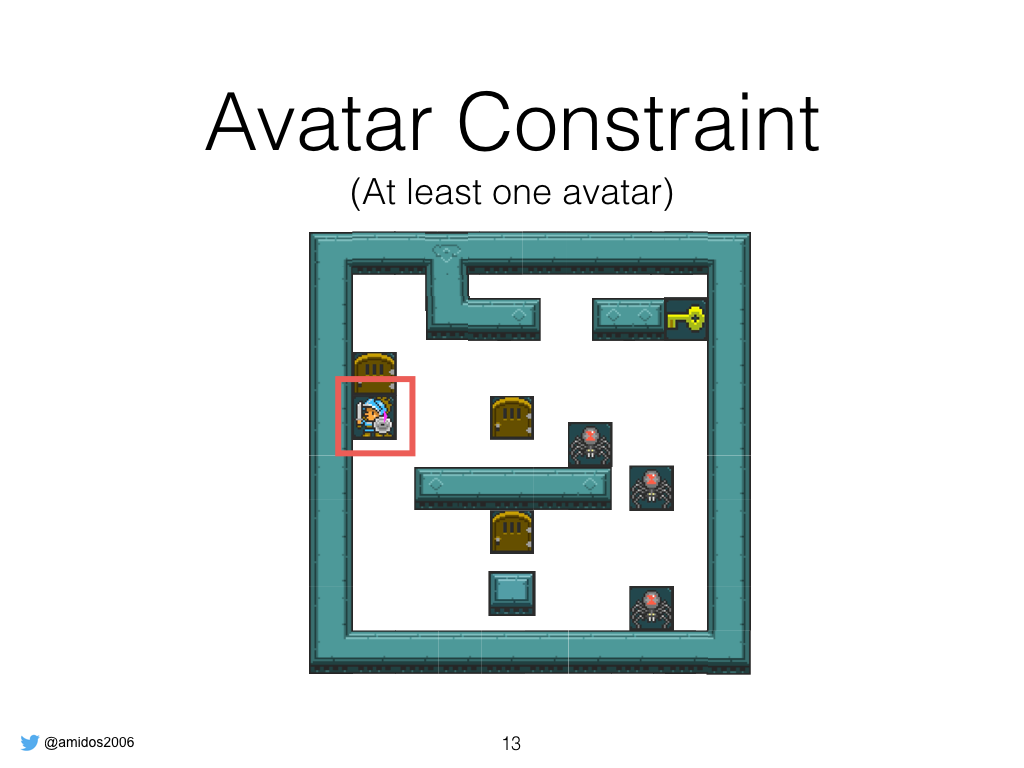

The next one makes sure there is one avatar, because if it’s not there it’s impossible to play the game.

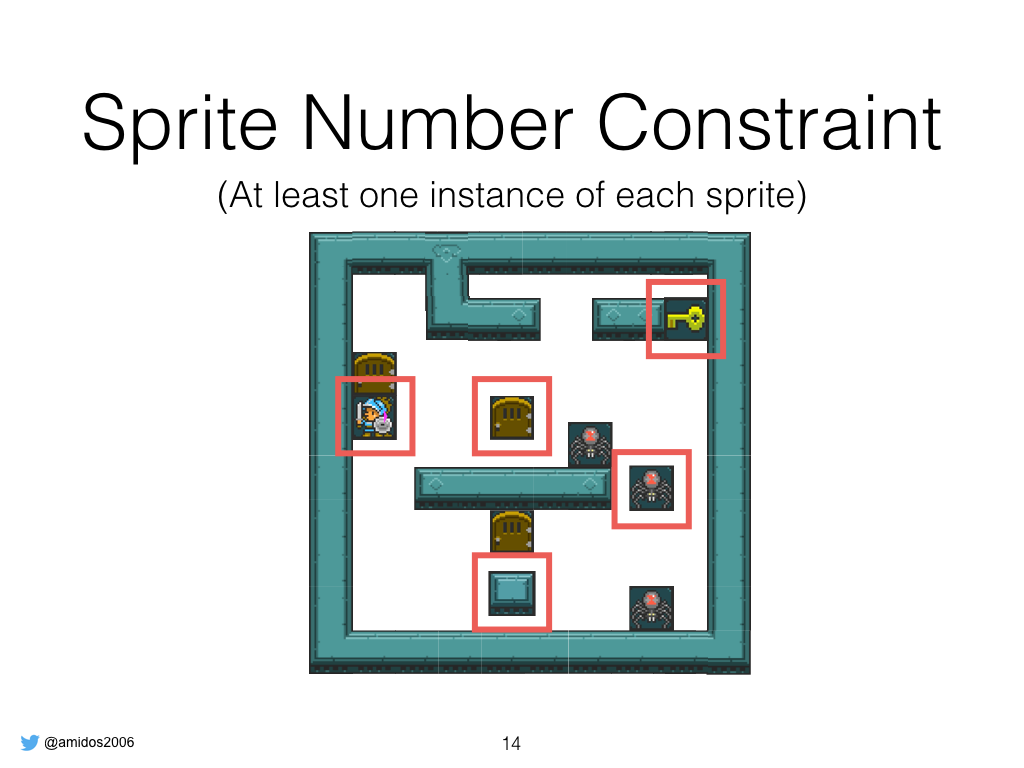

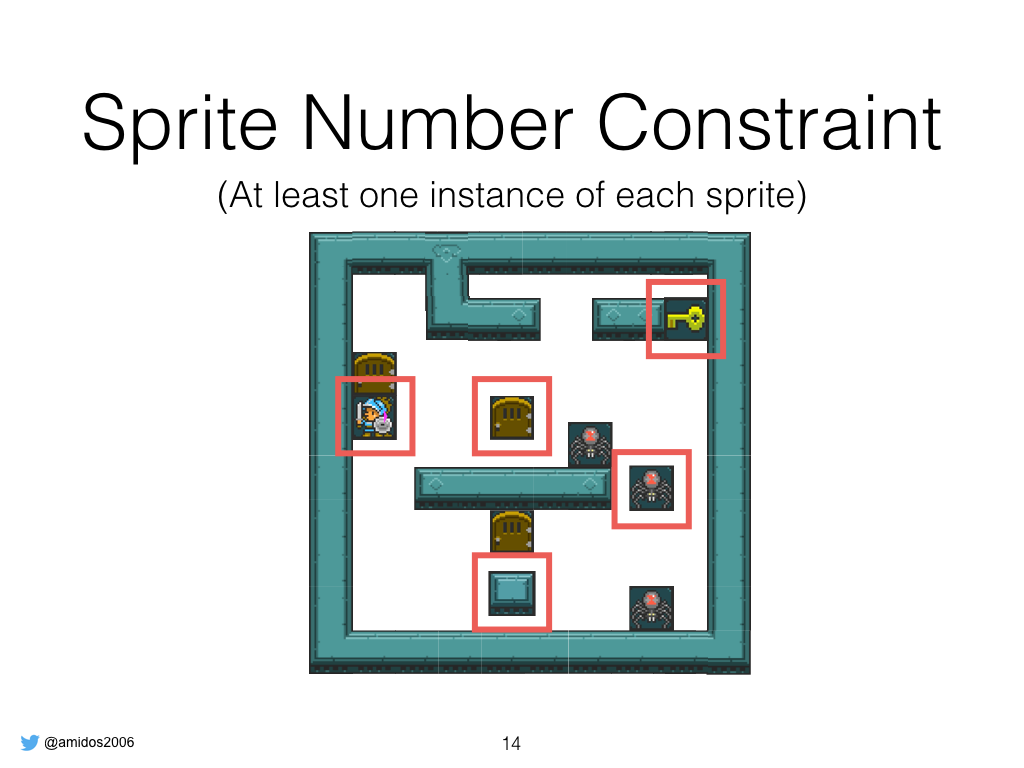

The sprite number constraint makes sure there is at least one sprite of each type, so the levels are playable. For example, if there is no key, you can’t win the level.

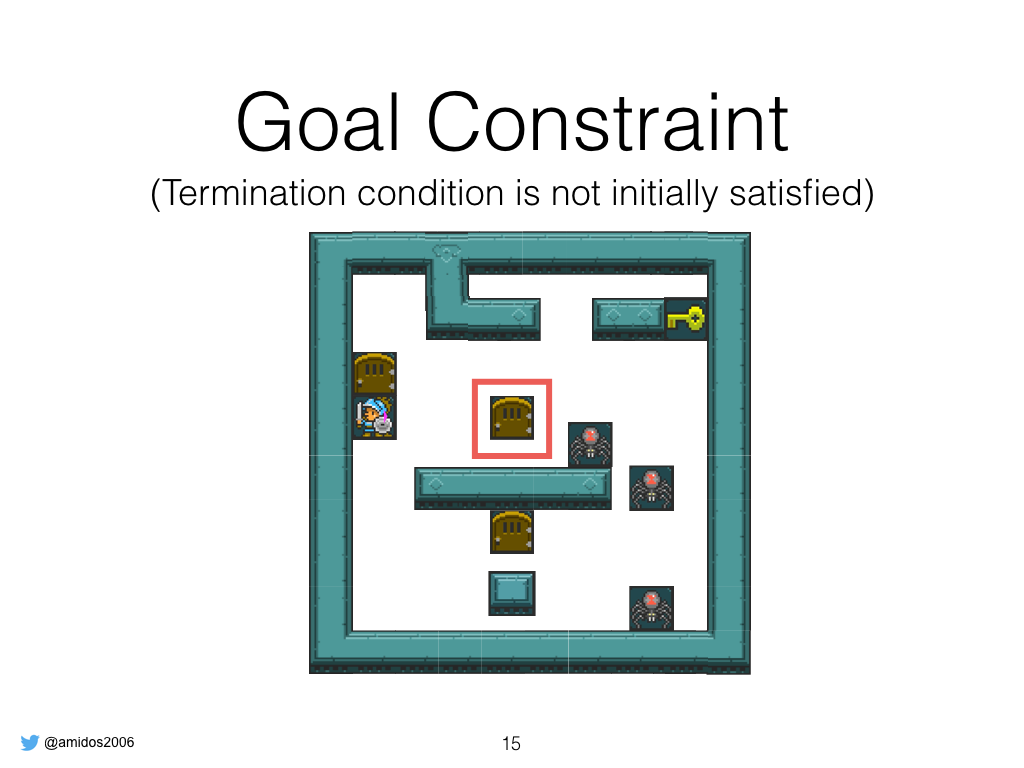

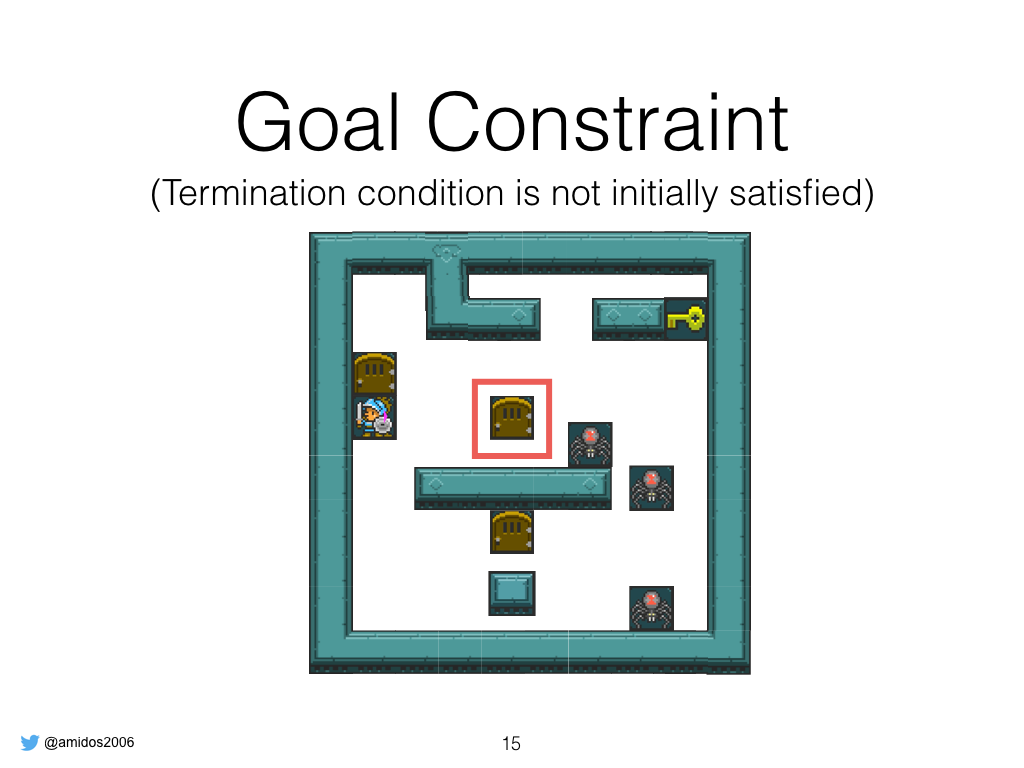

The goal constraint makes sure that the goal conditions are not already satisfied, because if they are satisfied you win as soon as the game starts.

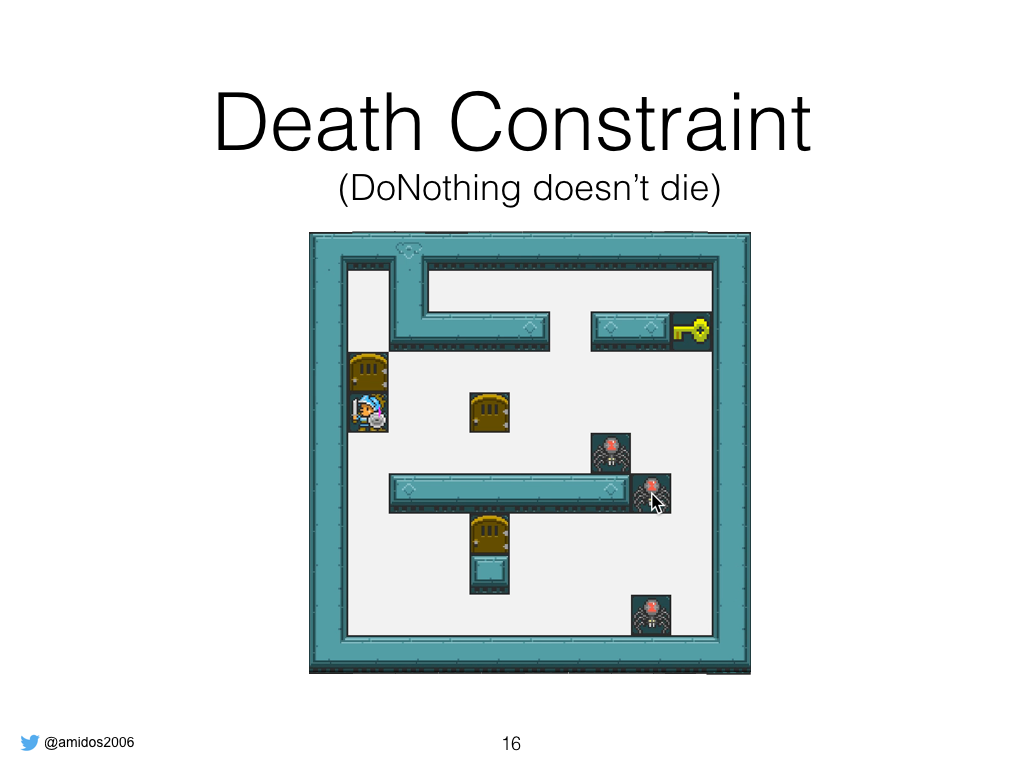

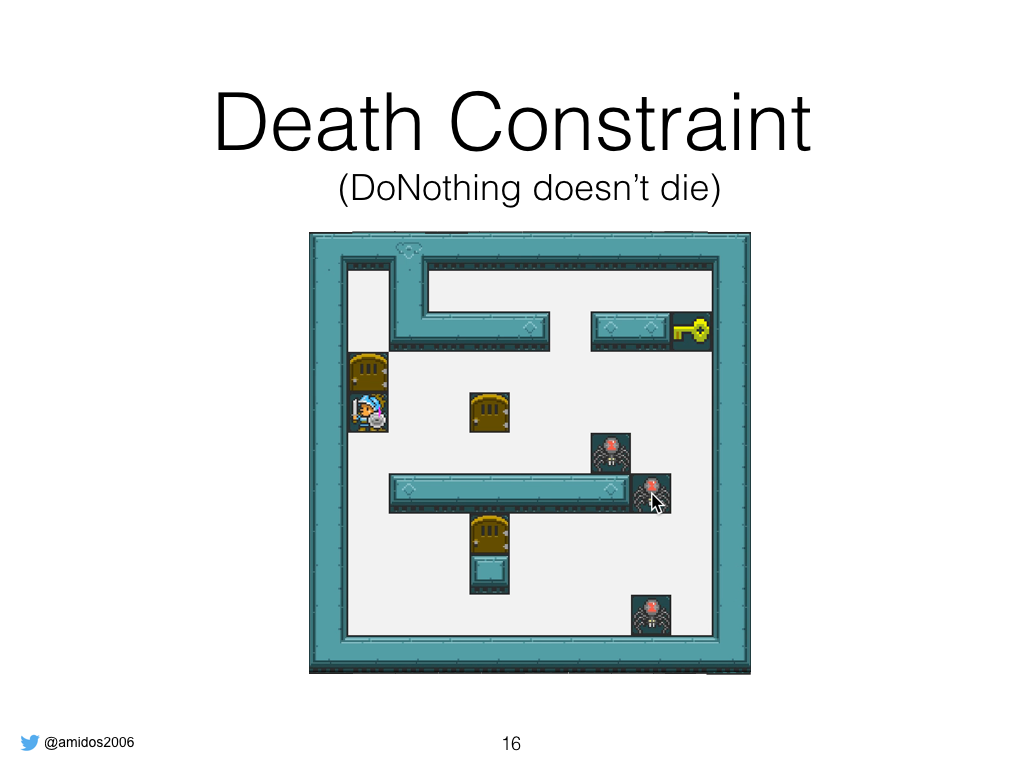

The death constraint makes sure that if the player does nothing for the first 40 game steps, he won’t die, because if the player dies right at the beginning the game is unplayable; humans can’t react that fast.

The last ones are the win constraint and the solution length constraint, which make sure the levels are winnable but not a straightforward win: the best agent has to take a certain number of steps before finishing.
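The constraints that need no play-through (cover percentage, avatar, and sprite number) can be checked directly on the level. The sketch below assumes a level is a flat list of tiles, each a list of sprite names; the threshold values and function names are made up for illustration and are not the ones used in the paper. The goal, death, win, and solution length constraints need a game simulation and are not shown.

```python
from collections import Counter


def cover_percentage(level):
    """Fraction of tiles that hold at least one sprite."""
    return sum(1 for tile in level if tile) / len(level)


def check_static_constraints(level, avatar, required,
                             min_cover=0.05, max_cover=0.30):
    """Return a pass/fail flag per constraint (thresholds are assumed)."""
    counts = Counter(sprite for tile in level for sprite in tile)
    return {
        "cover": min_cover <= cover_percentage(level) <= max_cover,
        "avatar": counts[avatar] == 1,
        "sprite_number": all(counts[s] >= 1 for s in required),
    }
```

A level with an avatar and a key but no door, for instance, passes the first two checks and fails the sprite number check.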

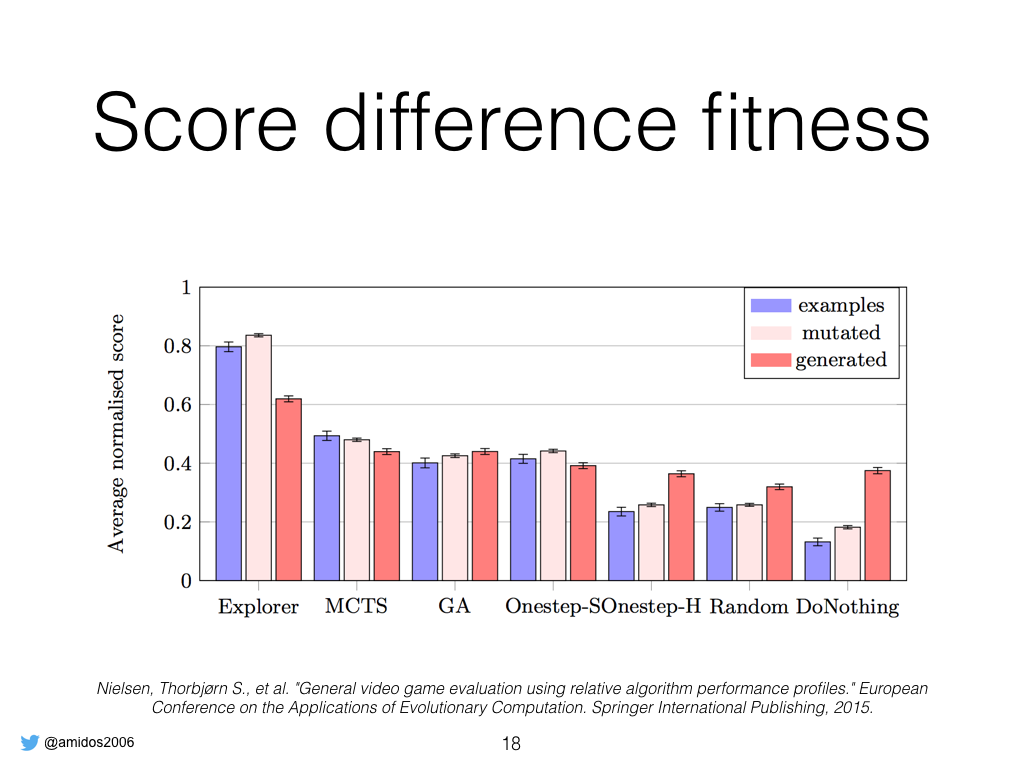

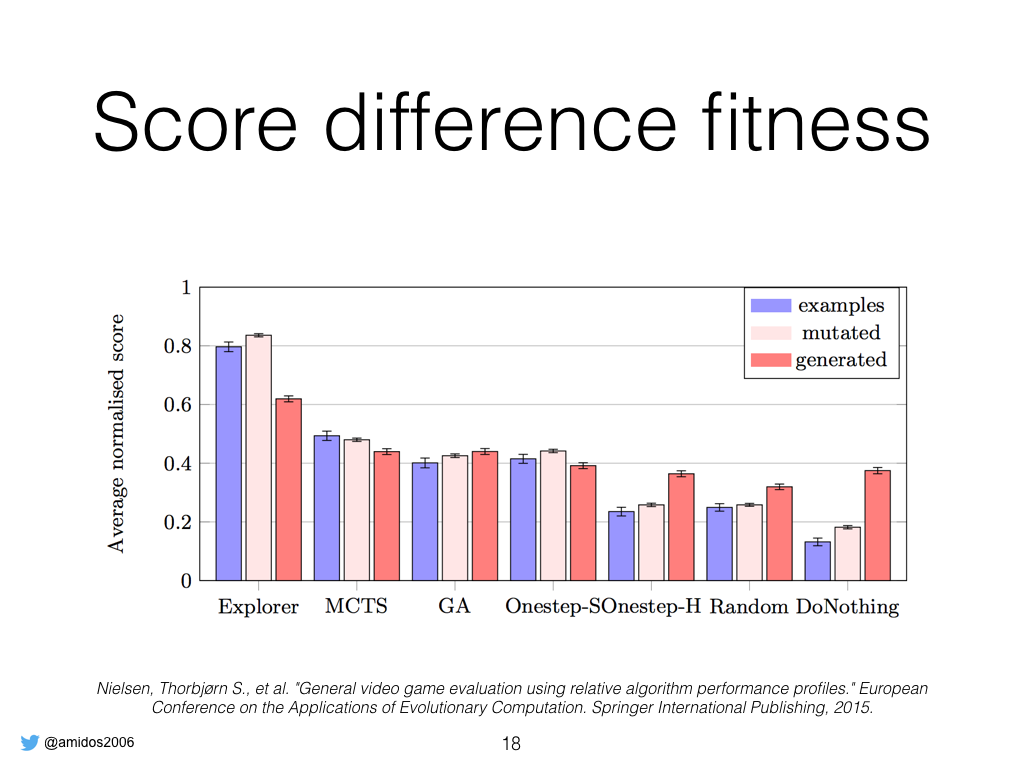

Those are all the constraints. As for the fitness, it consists of two parts. The first part is the score difference fitness, inspired by Nielsen’s work. He found that the difference in score between his best agent (Explorer) and his worst agent (DoNothing) is larger in well-designed levels than in randomly generated ones. We used the difference between our best agent and our worst agent for that.
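In code, the score difference is just a subtraction once both agents have played the level. In this sketch the agents are modeled as plain functions from a level to a final score, which is an assumption made for illustration; real agents would play through a game simulation.

```python
def score_difference(level, best_agent, worst_agent):
    """Score of the stronger agent minus score of the weaker one."""
    return best_agent(level) - worst_agent(level)


# Toy stand-ins for the two agents (hypothetical, for illustration only).
def toy_explorer(level):
    return level.count("k")  # pretends to collect every "k" in the level


def toy_do_nothing(level):
    return 0  # never moves, never scores
```

A level where skilled play earns nothing more than standing still gets a difference of zero, which is the signal this fitness is meant to penalize.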

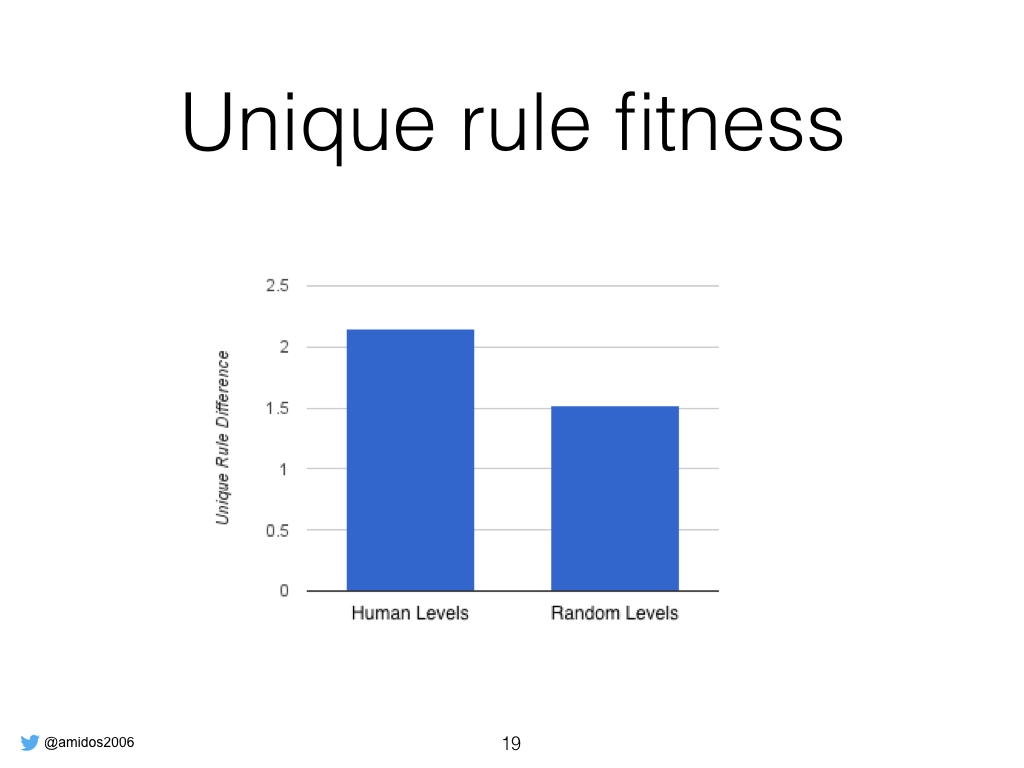

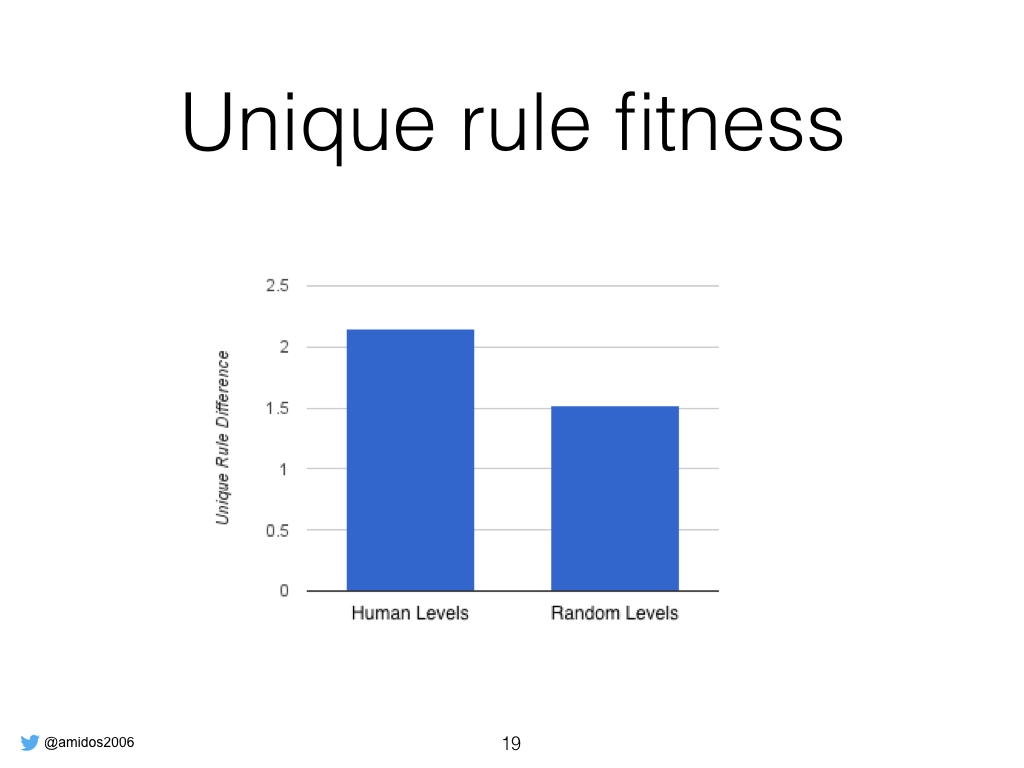

The second part is the unique rule fitness. By analyzing all the games, we noticed that agents playing well-designed levels encounter more unique interaction rules than agents playing randomly designed levels.
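Counting unique rules from a play trace can be sketched as below. It assumes the trace is a list of `(sprite_a, sprite_b, effect)` tuples, one per interaction that fired during play; that format and the effect names in the example are hypothetical.

```python
def unique_rule_count(events):
    """Number of distinct interaction rules that fired during a play-through."""
    return len(set(events))


trace = [
    ("avatar", "key", "collect"),
    ("avatar", "wall", "block"),
    ("avatar", "wall", "block"),  # same rule firing again, counted once
]
```

Repeated firings of the same rule do not add to the count, so bumping into a wall a hundred times scores the same as bumping into it once.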

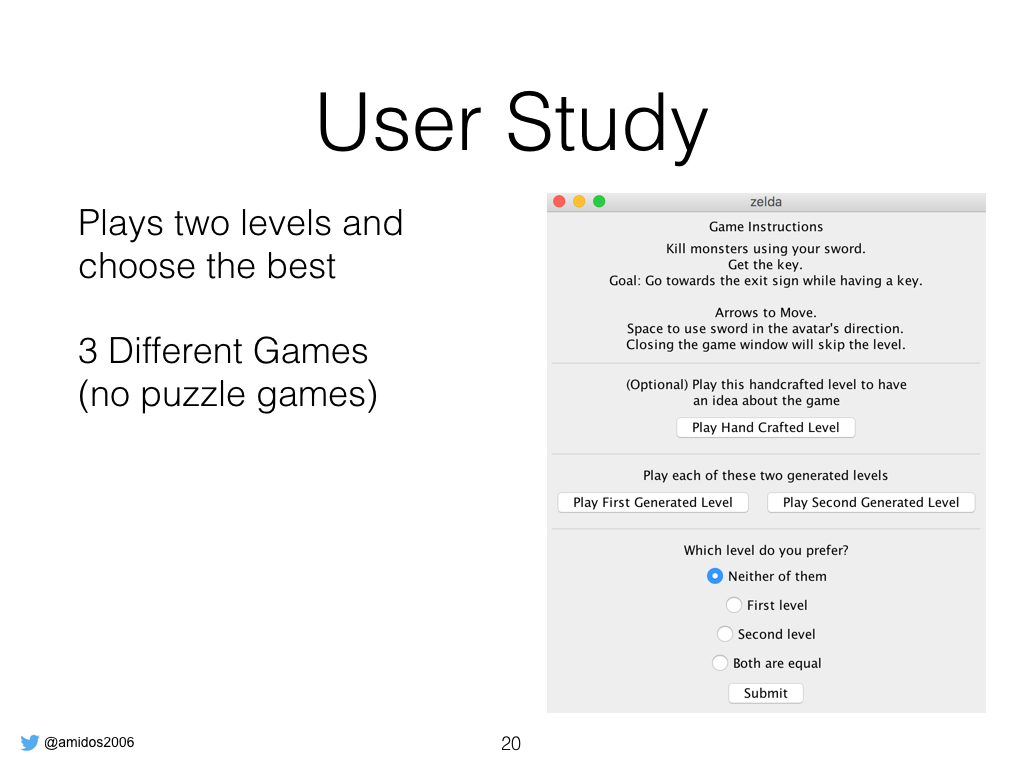

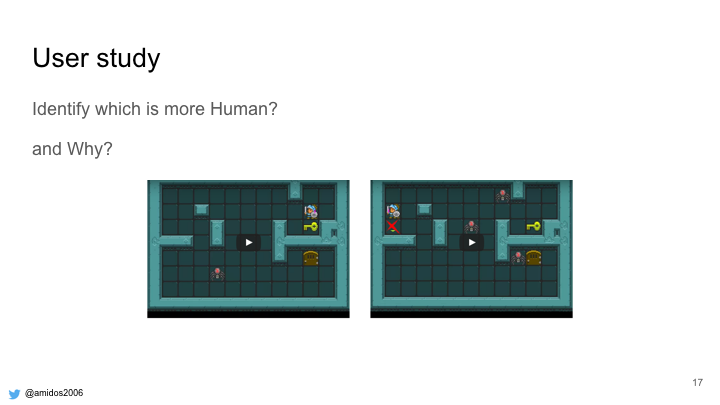

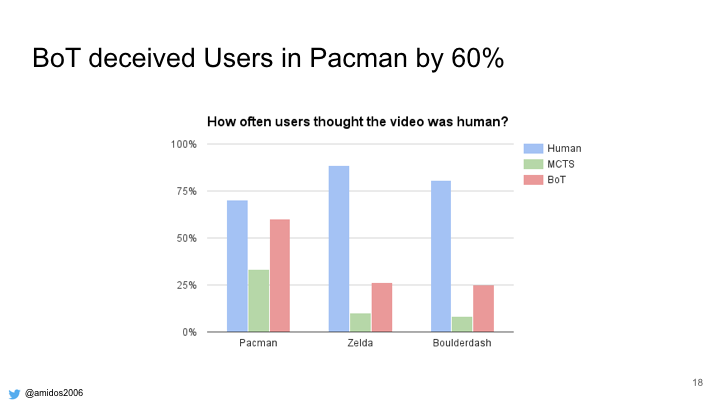

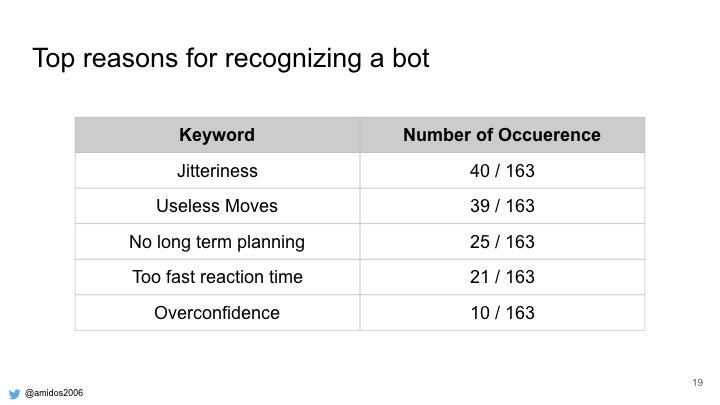

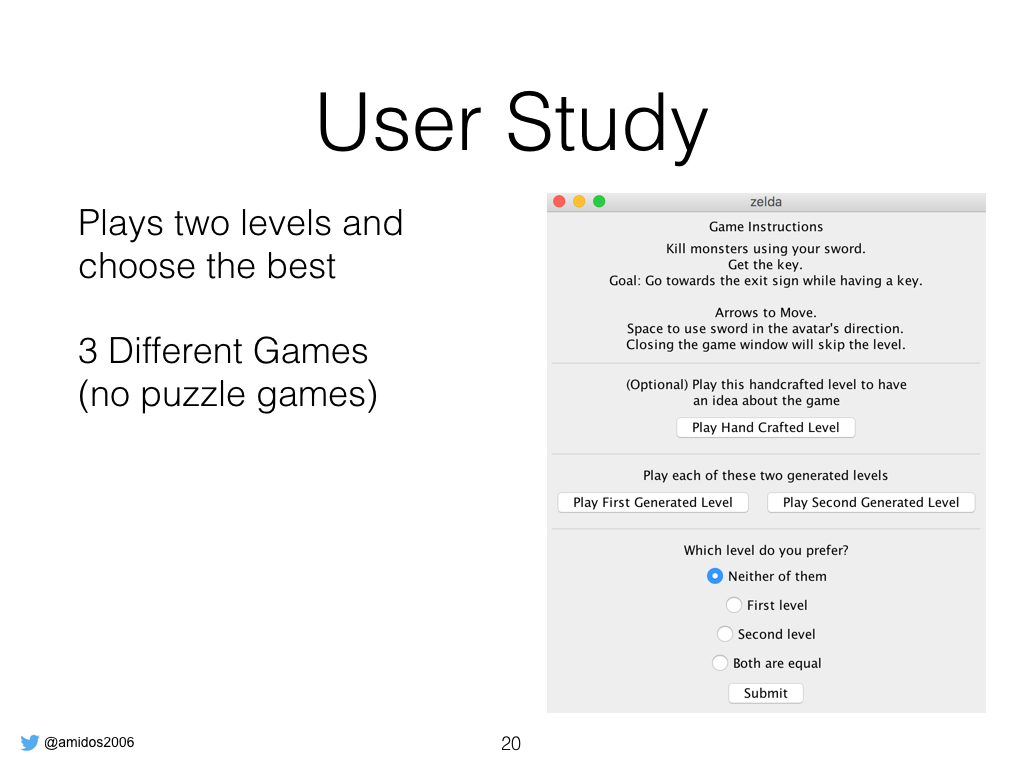

In order to verify that, we ran a user study where the user plays two levels from a certain game and chooses which is better. We used three different games and did not include puzzle games, as no agent excels at playing puzzle games so far.

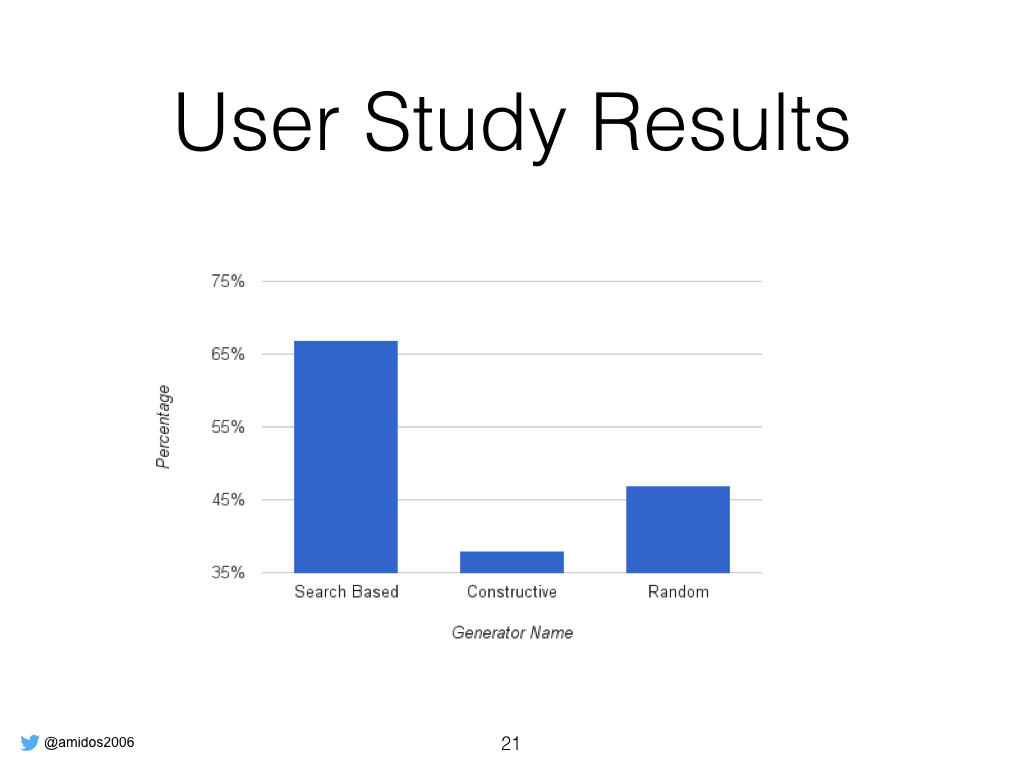

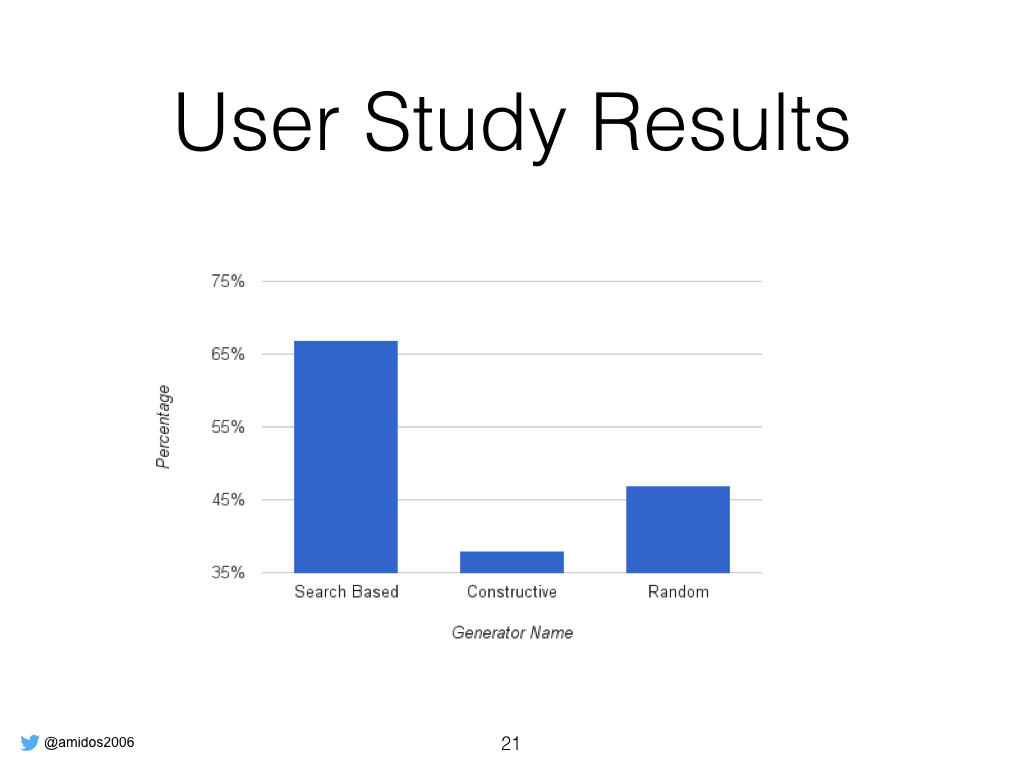

The results show that users preferred search-based levels over constructive ones, which was expected, while they preferred random over constructive, though without strong significance.

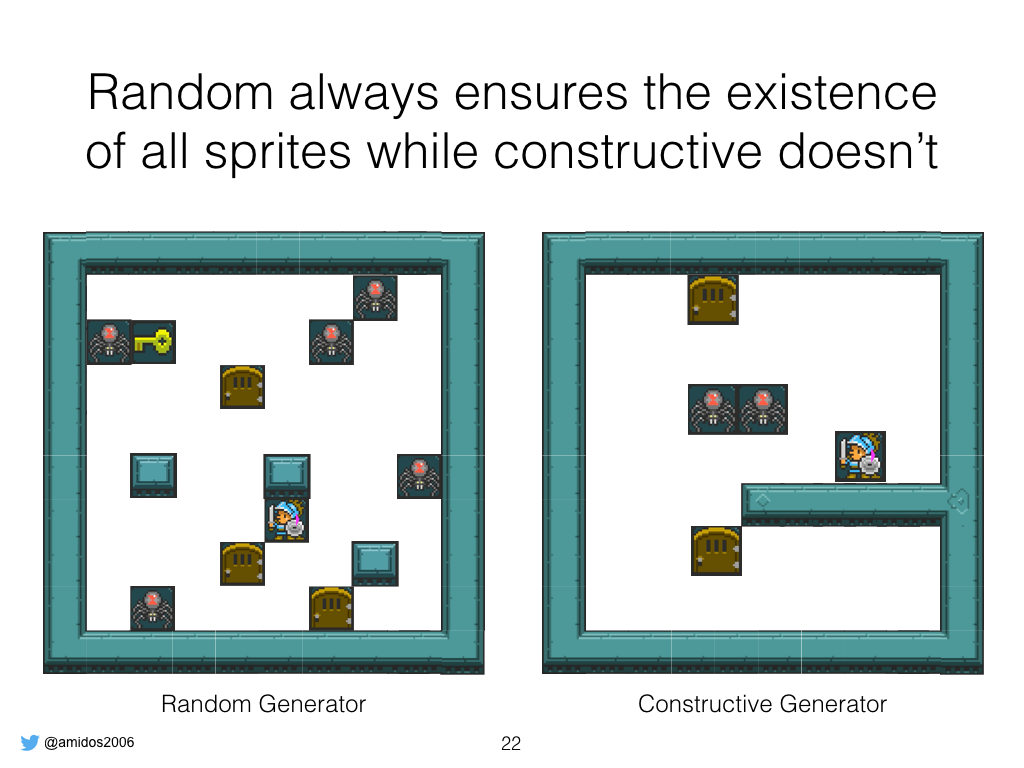

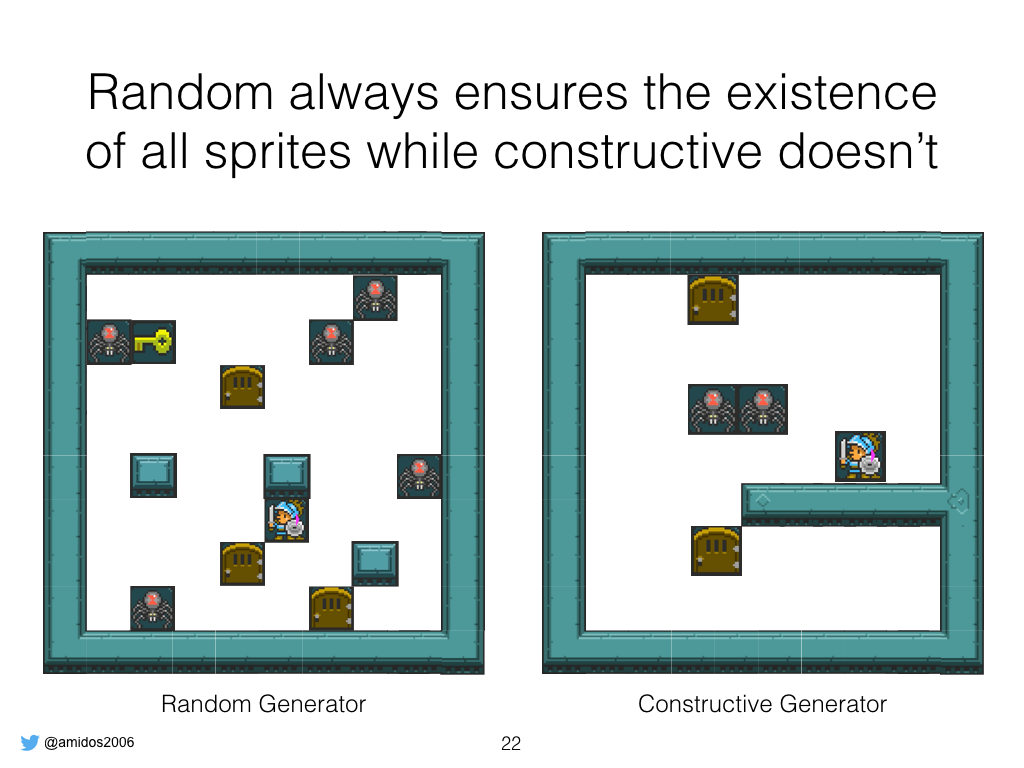

One of the main reasons, we think, is that people can’t differentiate between constructive and random levels, but the random generator always ensures all sprites are there, which makes its levels playable, while the constructive generator, although its levels look better, sometimes misses a key or something.

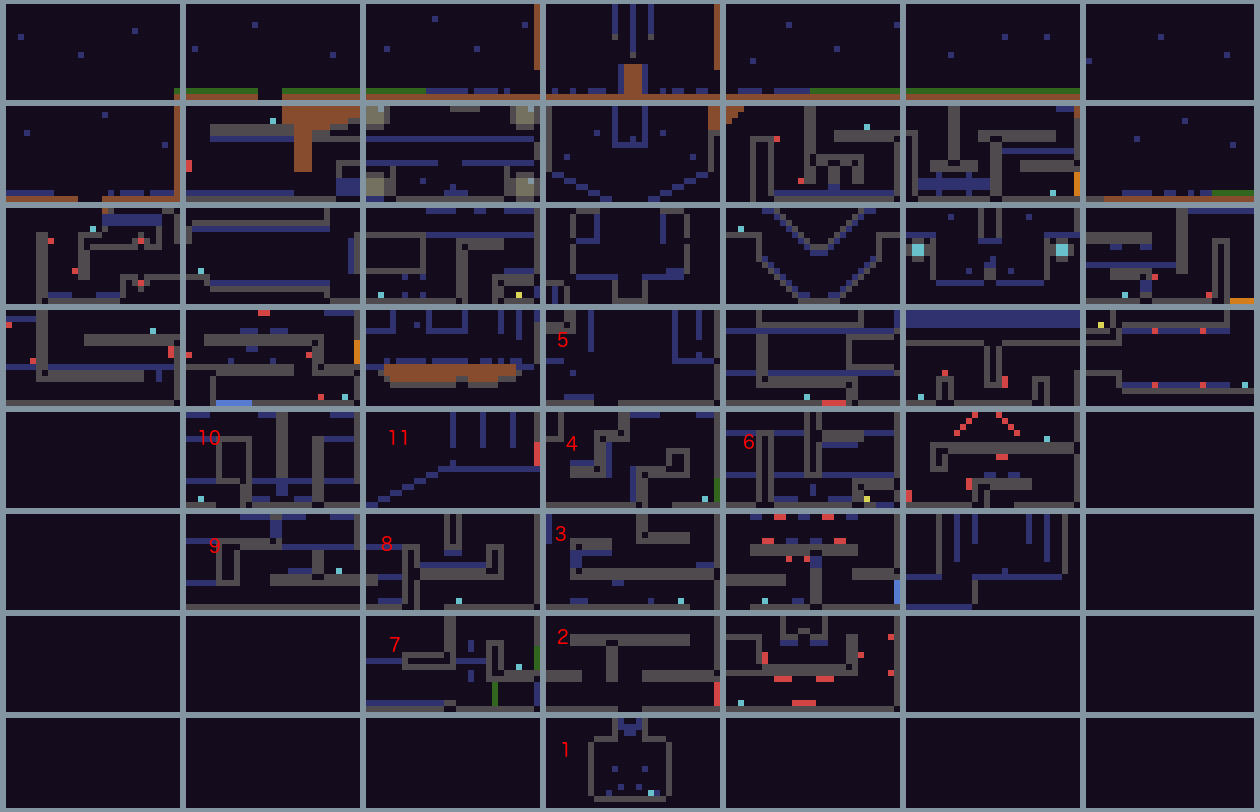

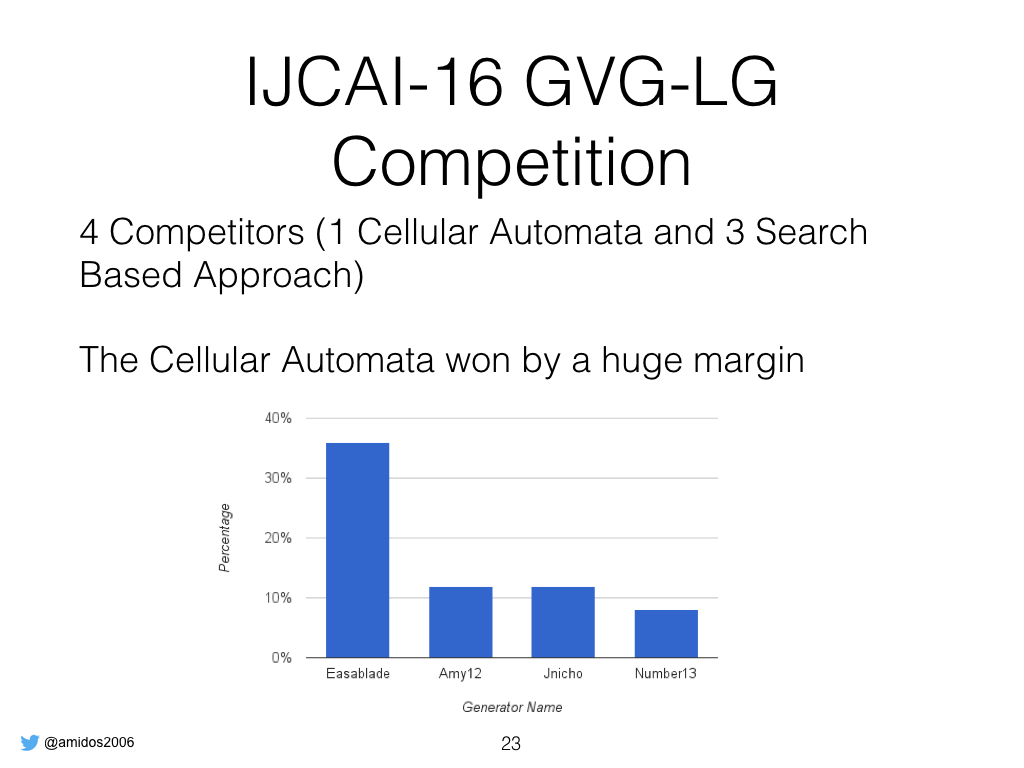

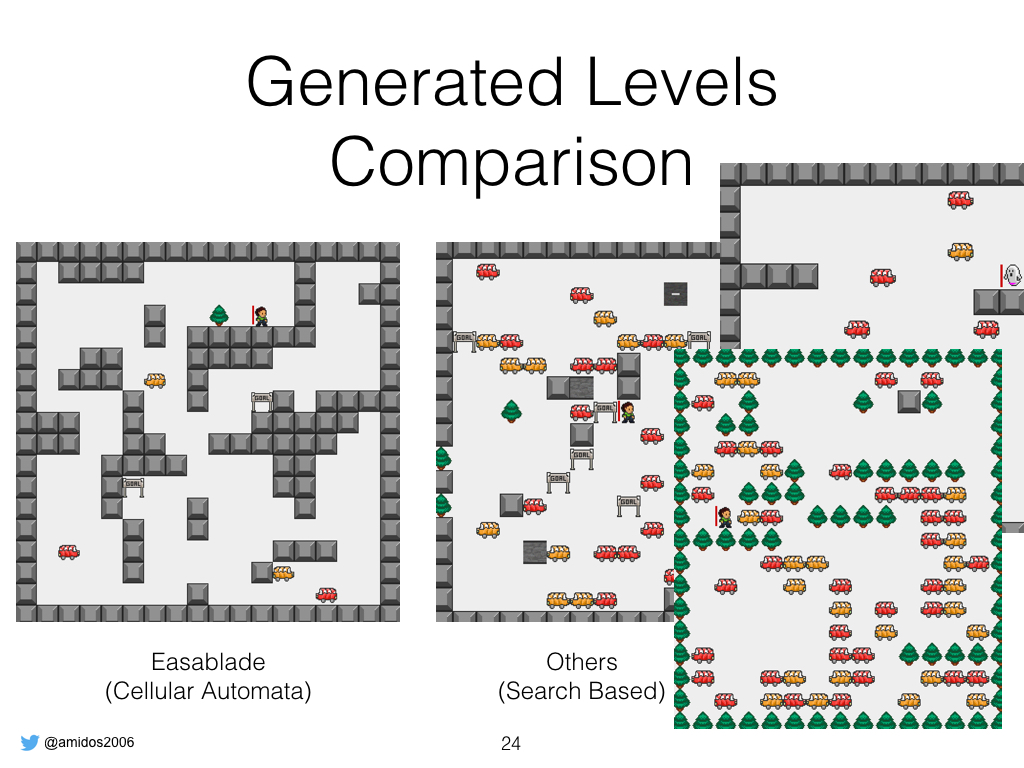

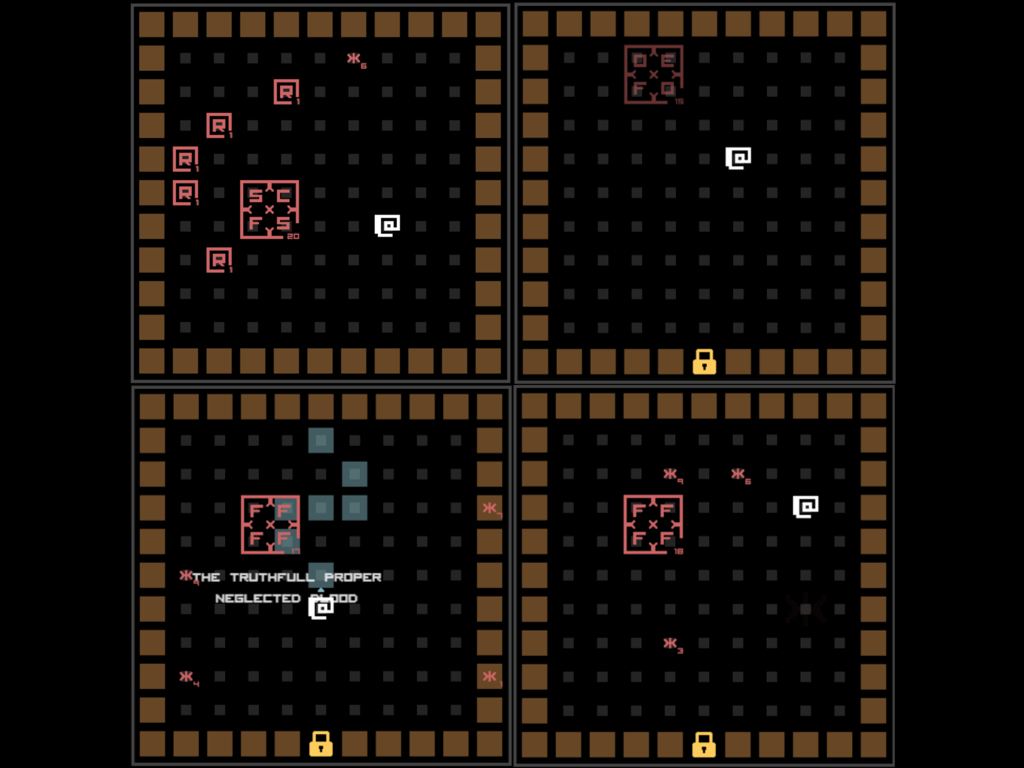

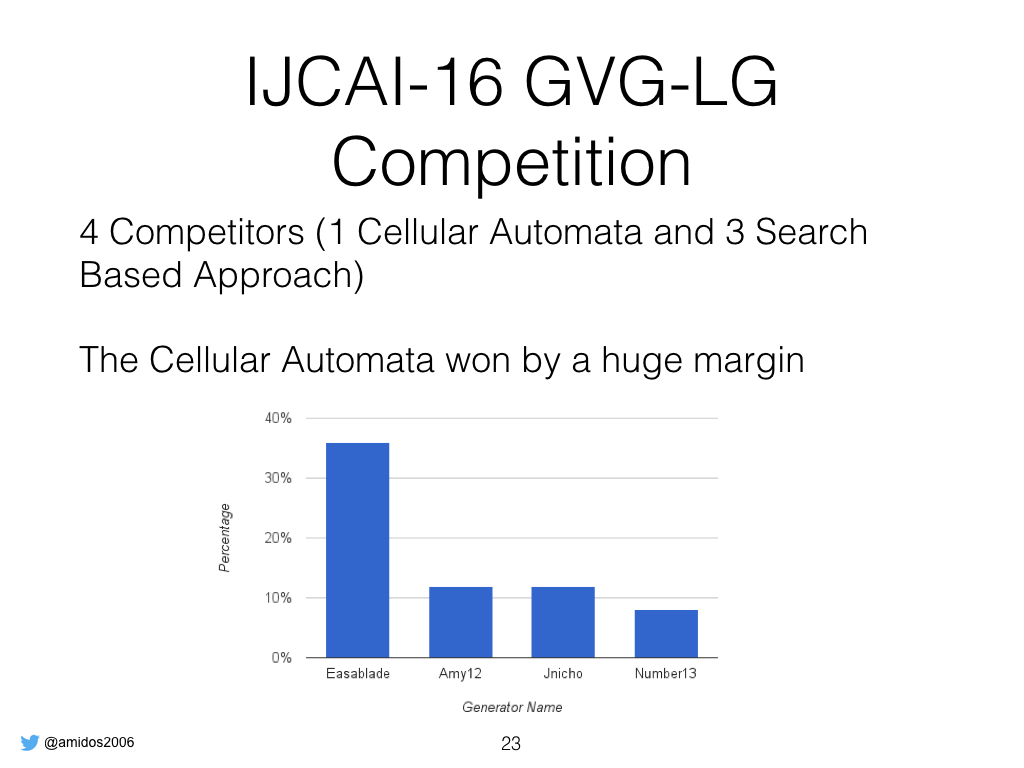

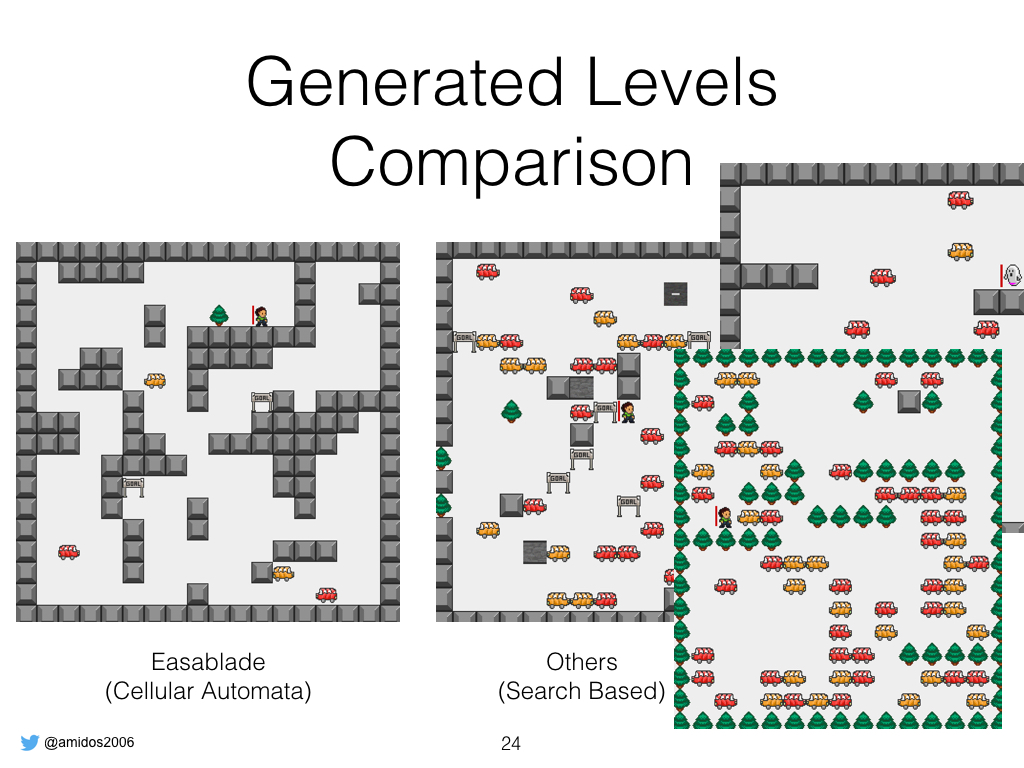

We ran a level generation competition one week ago. We had four competitors: one based on cellular automata and three based on search-based approaches.

The outcome came down to something simple: the cellular automata generator didn’t put tons of objects in the level, which made its levels somewhat playable, while all the others overloaded their levels with so many objects that players died instantly.

Our future work is enhancing the search-based generator to get better-designed levels, improving the framework based on the competitors’ feedback, and rerunning the competition and encouraging more people to participate.

That’s it, thanks.

That’s it for now.

Bye, Bye