Hello everyone,

I attended IRDC last weekend. There was a talk by Brett Gildersleeve (the developer of Rogue Space Marine and Helix) called “Real Time Synchronous Turn Systems in Roguelikes”. It analyzed the turn-based systems currently used in roguelikes and compared them to the system in his game Rogue Space Marine. The talk inspired me to write about different time systems and which games use which. His talk was amazing, but it left out many ideas that could be explored (it only covered the classic systems). Here are my ideas about these systems:


Different Time Systems

GECCO16: General Video Game Level Generation

Hello everyone,

This is my talk at GECCO16 for our paper “General Video Game Level Generation”.

Hello everyone, I am Ahmed Khalifa, a PhD student at NYU Tandon’s School of Engineering, and today I am gonna present my paper “General Video Game Level Generation”.

We want to design a framework that allows people to develop general level generators that work for any game described in the Video Game Description Language (VGDL).

So what is level generation? It is the use of computer algorithms to design levels for games. The game industry has been using it for a very long time. At the beginning the reason was technical limitations, but now it is used to provide more content to players, and it enables new types of games. The problem is that all the well-known generators are designed for a specific game, so they depend on domain knowledge to make the levels as good as possible. Doing that for every new game is exhausting, so we wanted a single algorithm that can be used on multiple games. To do that, we need a way to describe the game to the generators.
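To make “one algorithm for many games” concrete, here is a minimal sketch in Python: the generator never hard-codes a game, it only reads the game description. The `GameDescription` class, its fields (sprite names, avatar sprite, wall sprite, sprite-to-character level mapping), the `.` floor character, and `random_level` are all hypothetical simplifications for illustration, not the framework's real API or the generators from the paper; it is just a random-placement baseline.

```python
import random
from dataclasses import dataclass


# Hypothetical, heavily simplified stand-in for a parsed VGDL game description.
@dataclass
class GameDescription:
    name: str
    sprites: list[str]             # every sprite name the game declares
    avatar: str                    # sprite controlled by the player
    solid: str                     # sprite used for the border walls
    level_mapping: dict[str, str]  # sprite name -> character in the level file


def random_level(game: GameDescription, width: int, height: int, seed: int = 0) -> list[str]:
    """Game-agnostic baseline: uses only what the description exposes."""
    rng = random.Random(seed)
    char = game.level_mapping
    # Border cells become walls; "." is assumed to mean an empty floor tile.
    grid = [[char[game.solid] if x in (0, width - 1) or y in (0, height - 1) else "."
             for x in range(width)] for y in range(height)]
    interior = [(x, y) for y in range(1, height - 1) for x in range(1, width - 1)]
    rng.shuffle(interior)
    # Place exactly one avatar.
    x, y = interior.pop()
    grid[y][x] = char[game.avatar]
    # Place one copy of every other sprite while free cells remain.
    for sprite in game.sprites:
        if sprite in (game.avatar, game.solid) or not interior:
            continue
        x, y = interior.pop()
        grid[y][x] = char[sprite]
    return ["".join(row) for row in grid]


# Two toy games (hypothetical), one generator.
maze = GameDescription("maze", ["wall", "avatar", "goal"], "avatar", "wall",
                       {"wall": "w", "avatar": "A", "goal": "g"})
dungeon = GameDescription("dungeon", ["wall", "avatar", "key", "door", "enemy"], "avatar", "wall",
                          {"wall": "w", "avatar": "A", "key": "+", "door": "g", "enemy": "1"})
for game in (maze, dungeon):
    print(game.name)
    print("\n".join(random_level(game, 8, 5)))
```

The same function produces levels for both toy games because everything game-specific comes from the description. A smarter generator would use more of the description (for example the interactions and termination conditions) in the same way.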

IJCAI16 Talk: Modifying MCTS for Humanlike Video Game Playing

Hello everyone,

It's been ages since my last post 😀. On Thursday, July 14th, I gave a talk at IJCAI16 about my paper “Modifying MCTS for Humanlike Video Game Playing” with Aaron Isaksen, Andy Nealen, and Julian Togelius. Thanks to Aaron, who recorded a video of my talk. Here it is:

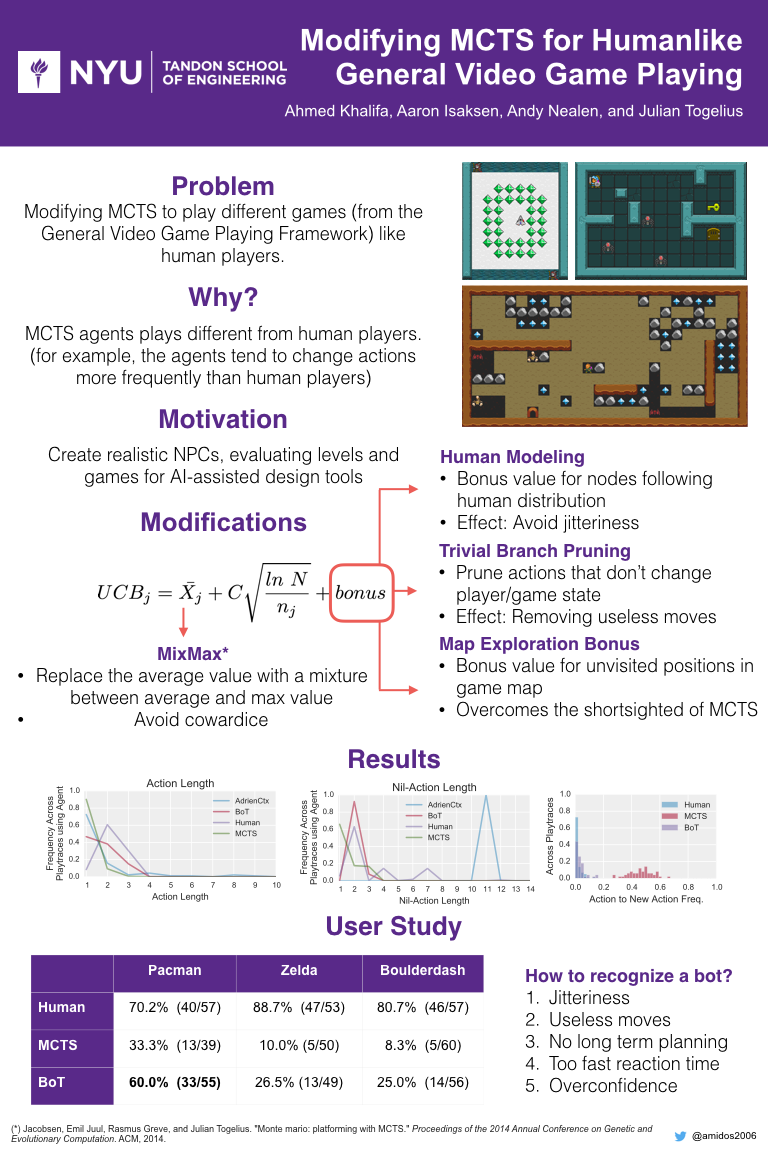

We also made a poster for the conference, which looked amazing. Here is the poster:

If the video is not clear, I am posting the slides here with my comments:

Hello everyone, I am Ahmed Khalifa, a PhD student at NYU Tandon’s School of Engineering. Today I am gonna talk about my paper “Modifying MCTS for Humanlike Video Game Playing”.